library(mvtnorm) # to sample from multivariate Gaussian

n=500 # number of individuals

fw <- 5 # follow-up duration

b_0=1 # intercept

b_1=-1 # slope

b_2=1 # trt

b_3=0.5 # trt x slope

b_e=0.5 # residual error

mestime=seq(0, fw, 1) # measurement times

n_i <- length(mestime)

time=rep(mestime, n) # time column

id<-rep(1:n, each=n_i) # individual id

b1SD=1 # random intercept standard deviation

b2SD=0.7 # random slope standard deviation

cor_bintslo=0.6 # correlation

cov_bintslo <- b1SD*b2SD*cor_bintslo # covariance

Sigma=matrix(c(b1SD^2, cov_bintslo, # variance-covariance matrix

cov_bintslo, b2SD^2), ncol=2)

MVnorm <- rmvnorm(n, rep(0, 2), Sigma) # sample from multivariate normal

b1 <- rep(MVnorm[,1], each=n_i) # random intercepts

b2 <- rep(MVnorm[,2], each=n_i) # random slopes

trt <- rbinom(n, 1, 0.5) # binary trt covariate

trtY <- rep(trt, each=length(mestime))

linPred <- b_0 + b1 + (b_1 + b2)*time + b_2*trtY + b_3*trtY*time

Y <- rnorm(n_i*n, linPred, b_e) # observed outcome

datJM_Lt <- data.frame(id, time, Y, trt=trtY)

baseScale <- 0.1 # baseline hazard scale parameter (exponential)

s_1 <- 0.5 # random intercept effect on survival

s_2 <- 0.3 # random slope effect on survival

u <- runif(n) # uniform distribution for survival times generation

eventTimes <- -(log(u)/(baseScale*exp(MVnorm[,1]*s_1 + MVnorm[,2]*s_2)))

eventIndic <- as.numeric(eventTimes<fw) # eventTimes indicator

## censoring individuals at end of follow-up

eventTimes <- pmin(eventTimes, fw)

datJM_S <- data.frame(id=1:n, eventTimes, eventIndic, trt) # survival data

## removing longi measurements after death

datJM_L <- datJM_Lt[-unlist(sapply(1:n,

function(x) which(datJM_Lt$time>=

datJM_S$eventTimes[datJM_S$id==x] &

datJM_Lt$id==x))),]4 Joint modeling of longitudinal and survival data

The scientific needs in modern medicine and epidemiology has evolved away from paradigms centered on single endpoints and simple cause-and-effect relationships toward a more holistic understanding of disease as a complex, dynamic system. This evolution is driven by a parallel revolution in data acquisition, where longitudinal cohort studies now generate high-frequency, high-dimensional data streams for each individual, including many biomarkers, genomic data, patient-reported outcomes, and event histories. This amount of data presents an opportunity to model the dynamics of diseases, but it also exposes the limitations of traditional statistical methodologies.

While an association between a longitudinal marker and an event of interest might be related to other covariates, joint modeling allows to include these covariates in order to remove their effect and study the residual link not related to observed covariates. For example, studying the relationship between smoking status and the risk of lung cancer. This association is known to be strong but could result from a confounder that increases both the risk of smoking and lung cancer, such as socioeconomic status. With a joint model, we can include a covariate that reflects socioeconomic status in both the model for smoking status over time, and the risk of lung cancer.

The development of joint modeling of a single longitudinal marker and a single survival outcome, has been a major methodological breakthrough. This model provided a robust framework to address informative censoring and to improve statistical efficiency. Joint modeling has been successfully applied across a wide range of medical and epidemiological fields to answer complex clinical questions such as linking longitudinal CD4 counts to the time to AIDS or death (Wulfsohn & Tsiatis (1997)) or modeling Prostate-Specific Antigen dynamics to predict cancer recurrence (Proust-Lima & Taylor (2009), Ferrer et al. (2016)), where joint models have demonstrated their superiority over simplified approaches. Some additional examples include quality of life and cancer survival (Song et al. (2016), Z. Li et al. (2013)), tumor lesions dynamics and cancer survival (Rustand et al. (2020), Rustand et al. (2023), Rustand, Briollais, et al. (2024), Kerioui et al. (2023)), repeated measurements of lipid and coronary heart disease (Kassahun-Yimer et al. (2020)), multiple longitudinal biomarkers related to heart failure (Field et al. (2023)), repeated echocardiographic measures and the risk of neoaortic valve regurgitation in patients after heart surgery (Baart et al. (2021)), longitudinal markers related to graft function and survival in patients with chronic kidney disease that received renal transplantation (Lawrence Gould et al. (2015)), aortic gradient dynamics and cardiac surgery outcomes (Andrinopoulou et al. (2014)), cognitive decline markers and the onset of Alzheimer’s disease (Dantan et al. (2011), Van Eijk et al. (2022)) or body mass index repeated measurements and the risk of knee failure in rheumatology (Arbeeva et al. (2020)).

However, as the complexity of biomedical data has continued to grow, the scientific need for more sophisticated models capable of handling multiple longitudinal markers and multiple survival outcomes simultaneously has become common. The primary limitation to the widespread adoption of these advanced multivariate models has not been a lack of statistical theory, but rather a computational bottleneck. Conventional estimation methods, particularly Bayesian approaches relying on Markov Chain Monte Carlo (MCMC) sampling, are notoriously slow and computationally expensive for the highly parameterized hierarchical models required for multivariate joint analysis. As documented in this book’s preface and demonstrated in previous works mentioned in Chapter 1, fitting such models can take many hours or even days and sometimes fail to converge. This computational barrier has often constrained researchers to find a pragmatic but scientifically unsatisfying compromise: choosing simpler, less realistic models based on computational feasibility rather than scientific appropriateness.

The framework detailed in this chapter, and implemented within the INLAjoint package, pushes these limitations and allows for more complex models. For instance in cancer clinical research, one can now investigate how the correlated trajectories of tumor size repeated measurements, circulating tumor DNA, and patient-reported quality-of-life collectively predict a patient’s transition through the states of remission, recurrence, and death. Similarly, it becomes possible to dynamically predict the competing risks of myocardial infarction versus stroke based on the simultaneous evolution of a patient’s complete cardiometabolic profile, including their lipid markers, inflammatory markers, and echocardiographic measurements. When studying aging cohorts, it is now possible to integrate multidimensional data from an entire panel of repeatedly measured markers such as cognitive scores and functional assessments in order to jointly predict an individual’s progression through the states of healthy aging, mild cognitive impairment and dementia, all while accounting for the competing risk of death. These are illustrative examples of the new class of scientific questions that this computationally efficient framework allows us to address. By enabling the rapid and robust estimation of these complex models, this methodology serves a dual purpose: it provides a powerful inferential tool to analyze the complex pathways of a disease and opens a new frontier in personalized medicine through dynamic, real-time risk prediction.

4.1 Rationale and overview of joint models

The simultaneous consideration of longitudinal and survival data in the context of joint models represents both a challenge and an opportunity. Indeed, when examining repeated measurements of a biomarker alongside an event of interest, an inherent association between these two outcomes often exists. The risk of the event can be influenced by the longitudinal biomarker, with biomarker measurements typically being truncated by the occurrence of the event. Joint models, integrating longitudinal and survival components, have become indispensable for capturing the relationship between time-varying endogenous variables and survival outcomes.

Endogenous longitudinal markers are variables whose values or future trajectory directly correlates with event status (see Section 2.4). Using time-dependent Cox models (Section 2.4) is inadequate for endogenous markers due to their inability to appropriately handle the inherent characteristics of these variables, such as the direct relationship with event status. Moreover both endogenous and exogenous time-dependent covariates are susceptible to measurement error and missing data due to discontinuous measurement times, which can be handled through joint modeling. Joint modeling emerges as a suitable and accurate alternative in such cases, facilitating a comprehensive understanding of the complex relationship between covariates and event outcomes. They enhance statistical inference efficiency by simultaneously utilizing both longitudinal biomarker measurements and survival times (Faucett & Thomas (1996), Hogan & Laird (1997)).

Fitting multiple regression models simultaneously and accounting for a possibly time-dependent association is computationally challenging. Two-stage approaches were initially proposed (De Gruttola & Tu (1994), Tsiatis et al. (1995)), involving fitting the longitudinal model first and include its expected value in a survival regression model in a second stage. However, these models suffer from important limitations, either because they overlook informative drop-out due to survival events or because they require to fit many longitudinal models (i.e., at each event time), leading to an infeasible computational burden and unrealistic assumptions on the distribution of random effects (Wulfsohn & Tsiatis (1997)). The simultaneous fit of the multiple components of a joint model was introduced a couple years later (Faucett & Thomas (1996), Henderson et al. (2000)), mitigating some bias observed with two-stage approaches and enhancing efficiency of statistical analyses by leveraging information from both data sources simultaneously, although long computation times were necessary for these types of models.

Recent advances in computing resources and statistical software have enabled the estimation of various joint models, but despite the interest in analyzing more than a single longitudinal outcome and a single survival outcome concurrently, most existing inference techniques and statistical software have limitations on such comprehensive joint models because of the computational challenge as stated in the literature (Huang et al. (2012), Hickey et al. (2016), N. Li et al. (2021), Rustand, Van Niekerk, et al. (2024)). Indeed, common estimation strategies such as Newton-Raphson, expectation-maximization (EM) or MCMC face limitations in scalability and convergence speed, particularly for complex data and models (i.e., many outcomes or parameters). In addressing this concern, the INLA methodology and the R packages R-INLA and INLAjoint emerges as a solution designed to address the need for a reliable and efficient estimation strategy for joint models.

With a flexible architecture, INLAjoint allows statisticians and researchers to construct complex regression models, treating univariate regression models as modular building blocks that can be assembled to effortlessly form complex joint models. INLA capitalizes on the sparse representations of high dimensional matrices to provide rapid approximations of exact inference. It has been recognized as a fast and reliable alternative to MCMC for Bayesian inference of joint models, with the capability to handle the increasing complexity inherent in joint models (Rustand, Van Niekerk, et al. (2024), Rustand et al. (2023)).

The standard joint model often employs a shared combination of fixed and random effects to analyze longitudinal outcomes and associated survival outcomes. An alternative is the latent class joint model (Proust-Lima et al. (2014)), which assumes the population comprises homogeneous groups with similar biomarker trajectories and event risks, this is not addressed in this book and we focus on the shared random effect joint models which involve a higher computational burden and thus benefit more from the INLA estimation, although latent class joint models could be developed within the INLA framework in the future.

While many methods implemented in different software were proposed to fit joint models with a single longitudinal and a single survival outcome, the available software for multivariate joint models is more limited. Here, we focus on methods that can (at least in theory, in practice many of these methods often fail to converge for joint models) deal with multiple longitudinal and/or multiple survival outcomes. In SAS (SAS Institute Inc. (2003)), the procedure NLMIXED has been widely used to fit various joint models (Guo & Carlin (2004), Zhang et al. (2014)) but it has poor scaling properties due to the curse of dimensionality of the Gauss-Hermite quadrature method used to do an analytical approximation of the integral over the random effects density in the likelihood of joint models. This multivariate integral is the main reason why joint models are computationally expensive.

In Stata, the merlin command has been proposed to provide a unified framework able to fit various joint models (Crowther (2020)) but it suffers from convergence problems and long computation time (Medina-Olivares et al. (2023)). In R (R Core Team (2025)), many packages have been introduced to fit various joint models, the most well-known being JM (Rizopoulos (2010)) which is limited to a single longitudinal outcome but can accommodate competing risks of event, a pseudo-adaptive Gaussian quadrature is used to integrate out random effects in the likelihood, which has better scaling properties compared to the standard quadrature but remains limited. The JMcmprsk package (H. Wang et al. (2021)) provides an implementation of joint models for continuous or ordinal longitudinal outcomes and competing risks data, using an EM algorithm for estimation.

The R package joineRML (Hickey et al. (2018)) uses a Monte Carlo expectation-maximization algorithm and can include multiple longitudinal outcomes but is limited to Gaussian distributions for the longitudinal markers and a single survival outcome. The R package gmvjoint (Murray & Philipson (2022)) also uses a frequentist approach based on an approximate Expectation-Maximization algorithm to fit joint models for multivariate longitudinal outcomes of various types and a survival outcome that can include competing risks or multi-state processes. The R package frailtypack (Rondeau et al. (2012)) can handle the simultaneous inclusion of recurrent events modeled with a shared frailty survival model and a terminal event along with a single longitudinal outcome using the Levenberg-Marquardt algorithm (i.e., a Newton-like algorithm). More recently, the R package FastJM (S. Li et al. (2025)) was introduced as an efficient implementation of a semiparametric joint model for multiple longitudinal biomarkers and competing risks, using a normal approximation and linear scan algorithms within an EM framework to improve scalability for large datasets.

While the aforementioned software packages are based on a frequentist framework, Bayesian inference has gained a lot of interest recently in the context of joint models. Simulation studies (Rustand, Van Niekerk, et al. (2024), Rustand et al. (2023)) for joint models show that frequentist statistics computed over joint models fitted with Bayesian inference, can be as good if not better compared to those obtained with frequentist inference (i.e., when data is not informative for the value of a parameter, frequentist inference will fail while Bayesian inference will return information from the prior, i.e., explicitly showing that the data is not informative for this parameter). Bayesian inference usually rely on sampling-based algorithms such as MCMC, resulting in a significant computational burden.

The R package JMbayes (Rizopoulos (2016)) is the “Bayesian version” of JM using MCMC to fit joint models, it can handle multiple longitudinal outcomes of different types and also multiple survival submodels such as competing risks or multi-state. It is not fully Bayesian as it relies on a corrected two-stage approach. More recently, the R package JMbayes2 (Rizopoulos et al. (2025)) has been proposed using a full Bayesian approach with MCMC, it can also handle multiple longitudinal outcomes of different types, competing risks and multi-state models as well as frailty models for recurrent events. It has better scaling properties compared to JMbayes as it uses parallel computations over chains and an efficient MCMC implementation in C++.

Finally, the R package rstanarm (Goodrich et al. (2020)) can deal with up to three longitudinal outcomes of different nature (at the moment) but is limited to a single survival outcome and it relies on the Hamiltonian Monte Carlo algorithm as implemented in Stan (Carpenter et al. (2017)), which has the expected slow convergence properties for joint models (Rustand, Van Niekerk, et al. (2024)).

All these softwares, while capable of fitting joint models, cannot be pictured as direct competitors but rather as benchmarks against which the flexibility of INLAjoint can be highlighted. Comparisons of some of these approaches with the INLA methodology have already been proposed in the literature. Rustand et al. (2023) compared the R packages R-INLA and frailtypack in simulation studies to fit a joint model for a semicontinuous longitudinal outcome and a terminal event in the context of cancer clinical trial evaluation and found that INLA was superior to frailtypack in terms of computation time and precision of the fixed effects estimation. The frequentist estimation faced some limitations and led to convergence issues when fitting a complex joint model.

Rustand, Van Niekerk, et al. (2024) compared INLAjoint with joineRML, JMbayes2 and rstanarm in multiple simulation studies involving up to three longitudinal markers along with a terminal event. It showed that INLA is reliable and faster than alternative estimation strategies, has very good inference properties and no convergence issues. However, while the R package R-INLA that implements the INLA methodology has been previously introduced to fit joint models (Van Niekerk et al. (2021)), the increasing complexity related to the inclusion of multivariate outcomes makes it cumbersome to use this package directly and motivated the development of the R package INLAjoint as a user-friendly implementation specifically for joint models.

As an illustration, the joint model fitted in the application of Rustand, Van Niekerk, et al. (2024), including 7 regression models for 5 longitudinal markers and 2 competing risks of event, was initially fitted with R-INLA and required more than 1000 lines of code while fitting the exact same model with INLAjoint requires 15 lines of code. This new package makes the use of INLA for joint modeling more user friendly and widely applicable, while it prevents some common mistakes in the code that can have important implications. INLAjoint uses a more friendly syntax than the R package R-INLA both for fitting joint models and in the output summary, moreover it offers more extensive post-processing tools appropriate for joint models compared to R-INLA such as specific plots and dynamic predictions as highlighted in the rest of this chapter.

4.2 Joint models as latent Gaussian models

Joint modeling involves fitting multiple longitudinal and/or survival outcomes simultaneously. When fitting multiple longitudinal outcomes, the outcome-specific mixed-effects regression models can be linked through the correlation of their random effects (see Section 3.4). On the other hand, when fitting longitudinal and survival outcomes or multiple survival outcomes, the models can be linked by sharing a linear combination of fixed and random effects from a submodel with another one (see Section 2.11). In both cases, the contribution to the likelihood of each submodel is independent conditional on these correlated or shared effects. The likelihood of a joint model then corresponds to the integral over the random effects density of the product of each submodel’s likelihood, where a survival model’s likelihood is defined as the product of individual likelihoods while a longitudinal model’s likelihood is the product of individual likelihoods across measurement occasions.

The main challenge in fitting joint models is the multivariate integral over the density of random effects in the likelihood function, which is usually handled by numerical approximation such as Monte Carlo sampling or Gauss quadrature methods which are time-consuming. In the Bayesian framework, the fixed and random effects are treated jointly as part of the parameters to be estimated. When Gaussian priors are assumed for all fixed and random effects, the fixed and random effects form a Gaussian latent field. In INLA, the fixed effects are given independent Gaussian prior distributions with zero mean and fixed variance while the Gaussian prior for each random effect are specified using sparse precision matrices, which gives a joint sparse precision matrix for the latent field (all the fixed and random effects).

Moreover, a second challenge is the association structure that links submodels for different outcomes. While correlated random effects are time invariant, it is common to share time-dependent components such as the individual deviation from the population mean as defined by random effects (shared random effects parametrization), the entire linear predictor (current value or current level parametrization) or the derivative over time of the linear predictor (current slope parametrization). These time-dependent associations complexify the contribution to the likelihood of survival models as the usual approach involves an analytical solution for the contribution to the likelihood of survival models because the likelihood contribution of observed and censored events involve the survival of the individual up to the event or censoring time. The survival function is based on the cumulative risk (i.e., the integral of the risk function), thus when a time-dependent component is included in the risk function, this integral needs to take into account the evolution of the time-dependent component during follow-up to compute the contribution to the likelihood. While other software deal with this additional integral with sampling or numerical approximation methods such as Gauss quadrature, INLA takes advantage of the decomposition of the follow-up into small intervals to account for the evolution of time-dependent components in the risk function as described in Section 2.2.3 and Section 2.4, which again avoids the need for time-consuming numerical approximation techniques and fits within the LGM framework.

This is also particularly useful for prediction purpose, where we can compute the risk at any time point conditional on the time-dependent components. While it sounds time-consuming to increase the data size for model fitting, the INLA method is specifically designed to take advantage of the latent Gaussian model structure, and the additional cost in speed of this technique is minimal compared to the use of numerical approximation of integrals or sampling. Overall, integrals in the likelihood are handled as conditional probabilities, directly resulting from Bayes theorem. More details on the formulation of joint models as LGMs are available in Martino et al. (2011), Van Niekerk et al. (2023), Van Niekerk et al. (2021) and Rustand, Niekerk, et al. (2024).

4.3 Joint model for one longitudinal and one survival outcome

Let’s consider the mixed-effects model for longitudinal data presented in Section 3.2.4, which include some fixed effects \(\beta\), a random intercept \(b_{i0}\) and a random slope of time \(b_{i1}\): \[Y_{i}(t)=\eta_{i}(t) = \beta_0 + b_{i0} + (\beta_1 + b_{i1})\cdot t + \beta_2\cdot trt_i + \beta_3\cdot trt_i\cdot t +\varepsilon,\] \[b_i|\Sigma_b \sim \textrm{N}\left( 0, \Sigma_b= \begin{pmatrix} \sigma_{b_0}^2 & \sigma_{b_{01}}\\ \sigma_{b_{01}} & \sigma_{b_1}^2\end{pmatrix}\right)\] We can assume some relationship between the longitudinal and a survival model based on some or all components of \(\eta_i(\cdot)\), which could be time dependent.

Let’s consider an event risk that depends on some component from the longitudinal model: \[\lambda_i(t)=\lambda_0(t)\exp\left(h(\eta_i(t))\right)\] where \(h(\eta_i(t))\) is a function of the parameters of the longitudinal model. Several association structures have been proposed in the literature (J.-L. Wang & Zhong (2024)), each one having a specific interpretation and purpose. The following table gives an overview of the available options for INLAjoint, each one presented in the next subsections.

| Association | Shared Component \(h(\eta_i(t))\) | Research Question | Time Dependency | Section |

|---|---|---|---|---|

| Shared Random Effects (SRE) | \(\gamma^T b_i\) | How does a subject’s individual deviation from population average of each random effect affect the risk of event? | No | 4.3.1 |

| Time-Dependent SRE | \(\gamma(b_{i0} + b_{i1} \cdot t)\) | How does a subject’s evolving deviation from the population average trajectory at time \(t\) affect the risk of event? | Yes | 4.3.2 |

| Current Value (CV) | \(\gamma \cdot \eta_i(t)\) | How does the “true” current value of the biomarker at time \(t\) affect the risk of event? | Yes | 4.3.3 |

| Current Slope (CS) | \(\gamma \cdot \frac{d\eta_i(t)}{dt}\) | How does the current rate of change (trend) of the biomarker at time \(t\) affect the risk of event? | Yes | 4.3.4 |

| Cumulative Value (AUC) | \(\gamma \cdot \int_a^b \eta_i(s) ds\) | How does the cumulative exposure of the biomarker in a specific time window affect the risk of event? | Yes | 4.3.6 |

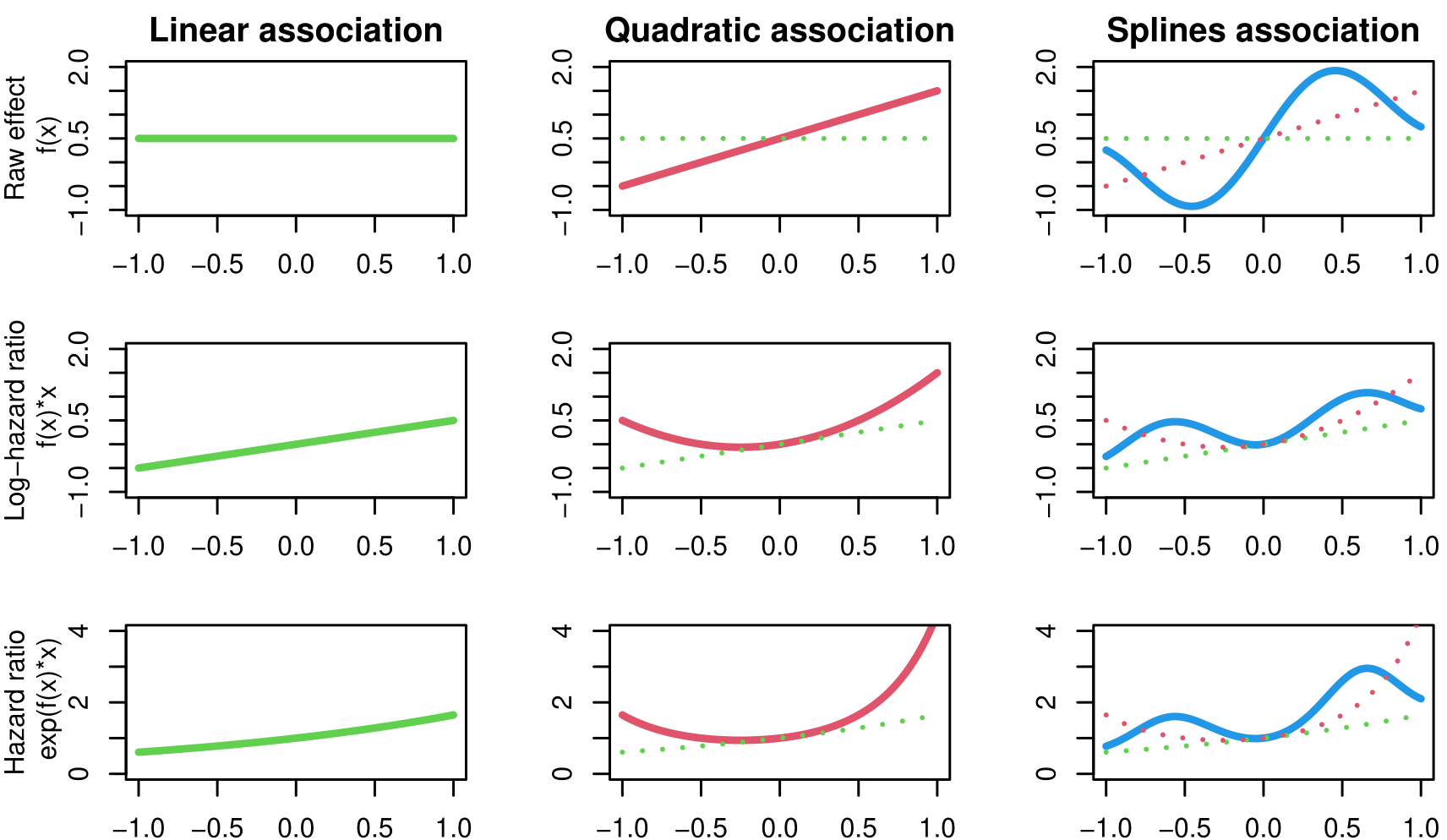

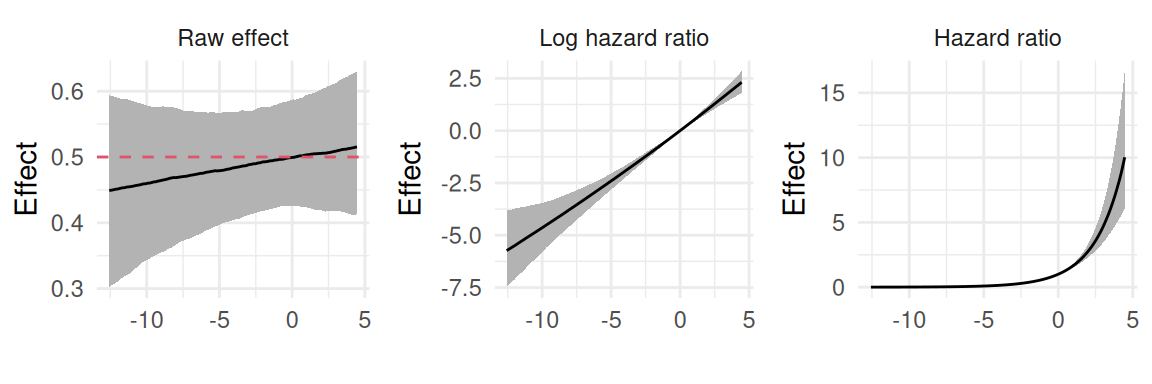

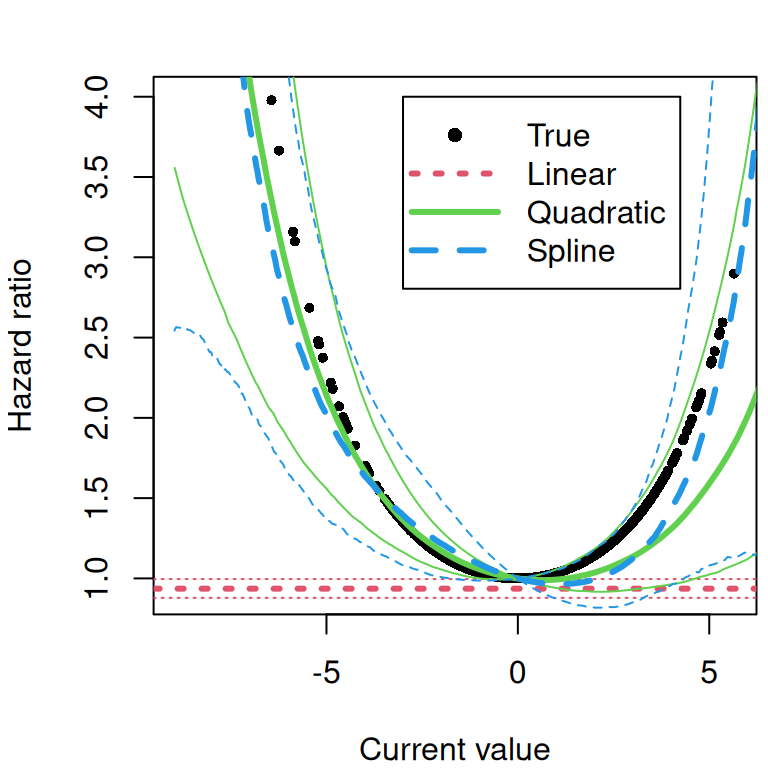

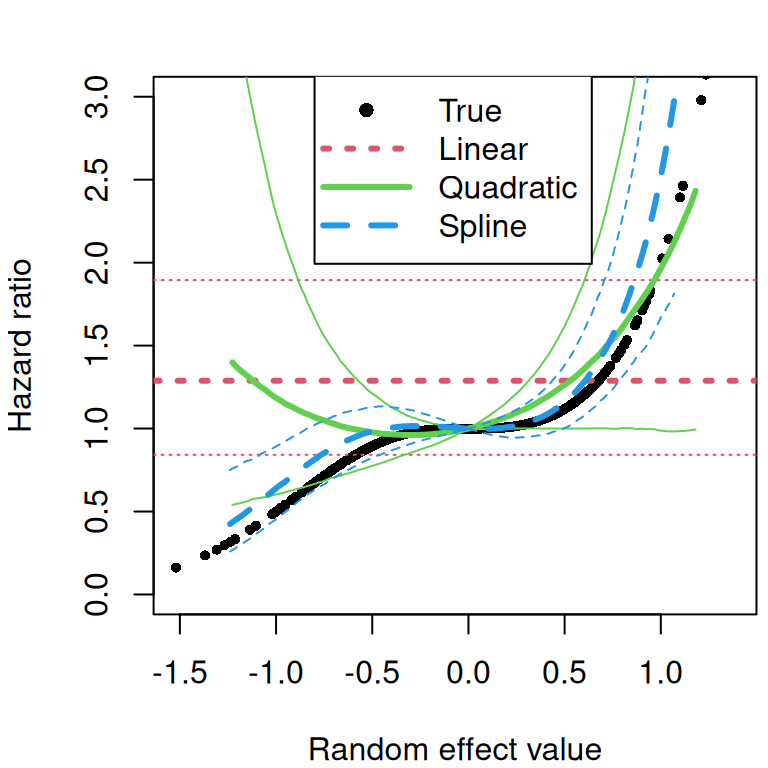

| Non-Linear Association | \(f(\eta_i(t))\) | Identify and describe non-linear relationship between a biomarker and an event risk (e.g. U shaped association where extreme values are associated to an increased risk) | Yes | 4.3.7 |

4.3.1 Shared random effects association structure

The shared random effects association structure provides a straightforward yet effective way to link the longitudinal and survival data. The purpose of this association structure is to account for the relationship between the longitudinal measurements and the time-to-event data by sharing the random effects. The model assume that individuals with similar deviations from the average longitudinal profile may also have similar survival outcomes. This structure is notably simple because it does not involve time-dependent components in the survival submodel. Instead, it relies on the random effects from the longitudinal model, which are assumed to capture the underlying individual-specific characteristics that influence both the longitudinal and survival processes. This simplicity makes the shared random effects association structure an attractive option to avoid the complexity of time-dependent covariates in the survival model.

Beyond this computational aspect, sharing each random effect separately allows to identify key characteristics that link the biomarker and the risk of event (e.g., is the individual deviation from the average baseline value of the biomarker associated to the risk of event? Same question for the rate of change instead of baseline value, etc.). We can write the model as follows, based on the random intercept-slope example:

\[\begin{equation*} \left\{ \begin{array}{lc} \textrm{E}[Y_{i}(t)]= \beta_0 + b_{i0} + (\beta_1 + b_{i1})\cdot t + \beta_2\cdot trt_i + \beta_3\cdot trt_i\cdot t \\ \lambda_i(t)=\lambda_0(t)\exp\left(\gamma_0\cdot b_{i0} + \gamma_1\cdot b_{i1}\right) \end{array} \right. \end{equation*}\]

The fixed effects \(\gamma\), are used to scale the random effects in the survival submodel (of note, they are treated as hyperparameters in the INLA methodology). The random intercept is scaled by \(\gamma_0\) while the random slope is scaled by \(\gamma_1\). For simplicity, we only assume treatment effect in the longitudinal submodel but in the following we show that this treatment effect can be shared through the association.

The simulation of data from a joint model with a shared random effects structure is done in two steps. First, we generate the longitudinal data, and then we use the latent variables from that process to generate the survival data:

Step 1: As described in Chapter 3, we first generate a set of correlated random effects for each individual. These are used to construct the individual-specific longitudinal trajectory, and residual error is added to produce the observed longitudinal outcomes.

Step 2: The key feature of this joint model is that the hazard function for the survival outcome incorporates the same random effects that drive the longitudinal process.

To simulate an event time, we use the principle of inverse transform sampling as detailed in Chapter 2. For a simple exponential baseline where \(\lambda_0(t)=\mu\), the survival function is: \[S(t)=\exp(-\mu t \exp(\gamma_0 \cdot b_{i0} + \gamma_1 \cdot b_{i1}))\] Setting a uniform random variable \(u \sim U(0, 1)\) equal to \(S(t)\) and solving for the event time t gives: \[t = \frac{-\log(u)}{\mu\exp(\gamma_0 \cdot b_{i0} + \gamma_1 \cdot b_{i1})}\]

We have created two datasets, datJM_L contains longitudinal data, where each individual has one or multiple recorded values of the longitudinal outcome Y:

head(datJM_L, 12) id time Y trt

1 1 0 1.8649148 1

2 1 1 1.8238544 1

7 2 0 -0.1964851 0

8 2 1 -2.1884524 0

9 2 2 -4.4269076 0

10 2 3 -6.1963592 0

11 2 4 -8.1028639 0

13 3 0 2.2953455 1

14 3 1 1.6459310 1

15 3 2 2.4174909 1

16 3 3 2.1877175 1

17 3 4 0.9962945 1The second dataset datJM_S contains one line per individual and gives the time of the event or censoring.

head(datJM_S, 5) id eventTimes eventIndic trt

1 1 1.240749 1 1

2 2 5.000000 0 0

3 3 5.000000 0 1

4 4 5.000000 0 1

5 5 5.000000 0 1We can see that when an individual experiences the event, it does not produce longitudinal measurements anymore, meaning that the event is censoring the longitudinal process. Joint models allow us to tackle this known source of informative censoring to enhance the analysis of the longitudinal marker. Additionally, since the survival process depends on the shared random effects, it means that individual characteristics that can be captured in the longitudinal model informs the risk of event and therefore joint modeling can enhance the analysis of event times by explaining some individual level variability in the risk.

Based on this data and the code presented in Chapter 2 and Chapter 3, we could fit a mixed-effects model for the longitudinal outcome:

library(INLA)

library(INLAjoint)

M_L <- joint(formLong = Y ~ time * trt + (1 + time | id),

dataLong=datJM_L, id="id", timeVar="time")and similarly we could fit a proportional hazards regression model for survival times:

M_S <- joint(formSurv = inla.surv(eventTimes, eventIndic) ~ -1,

dataSurv = datJM_S)However, we are interested in the joint modeling of these two outcomes and therefore we must combine these two models. This can be done directly with the joint() function with the following syntax:

M_jm <- joint(formSurv = inla.surv(eventTimes, eventIndic) ~ -1,

formLong = Y ~ time * trt + (1 + time | id),

dataSurv = datJM_S, dataLong=datJM_L,

id="id", timeVar="time", assoc="SRE_ind")We can clearly see how the univariate models are combined here, since we included the formula for the survival outcome formSurv and the corresponding data dataSurv as well as the formula for the longitudinal submodel formLong and the corresponding data dataLong. The id and timeVar arguments are indicating the columns to identify the grouping (i.e. individuals in this case) and the name of the column for time. The assoc argument is a character string that specifies the association between the longitudinal and survival components. There are multiple options available and described throughout this chapter. Here we set the association of interest: SRE_ind which stands for “shared random effects independently”, since each random effect is shared and scaled in the survival submodel.

In Section 2.8 and Section 3.4, we introduced the specific data format required to fit multiple survival and multiple longitudinal outcomes with R-INLA, respectively. Here for simplicity we do not show the estimation with R-INLA and focus on the user-friendly interface INLAjoint, but we can have a look at the data structure in the fitted object:

str(M_jm$.args$data)List of 22

$ Intercept_L1 : num [1:7884] 1 1 1 1 1 1 1 1 1 1 ...

$ time_L1 : num [1:7884] 0 1 0 1 2 3 4 0 1 2 ...

$ trt_L1 : num [1:7884] 1 1 0 0 0 0 0 1 1 1 ...

$ time.X.trt_L1 : num [1:7884] 0 1 0 0 0 0 0 0 1 2 ...

$ IDIntercept_L1 : num [1:7884] 1 1 2 2 2 2 2 3 3 3 ...

$ IDtime_L1 : num [1:7884] 501 501 502 502 502 502 502 503 503 503 ...

$ WIntercept_L1 : num [1:7884] 1 1 1 1 1 1 1 1 1 1 ...

$ Wtime_L1 : num [1:7884] 0 1 0 1 2 3 4 0 1 2 ...

$ Y_L1 : num [1:7884] 1.865 1.824 -0.196 -2.188 -4.427 ...

$ y1..coxph : num [1:7884] NA NA NA NA NA NA NA NA NA NA ...

$ E..coxph : num [1:7884] NA NA NA NA NA NA NA NA NA NA ...

$ expand1..coxph : int [1:7884] NA NA NA NA NA NA NA NA NA NA ...

$ baseline1.hazard : num [1:7884] NA NA NA NA NA NA NA NA NA NA ...

$ baseline1.hazard.idx : num [1:7884] NA NA NA NA NA NA NA NA NA NA ...

$ baseline1.hazard.time : num [1:7884] NA NA NA NA NA NA NA NA NA NA ...

$ baseline1.hazard.length: num [1:7884] NA NA NA NA NA NA NA NA NA NA ...

$ Intercept_S1 : num [1:7884] NA NA NA NA NA NA NA NA NA NA ...

$ SRE_Intercept_L1_S1 : num [1:7884] NA NA NA NA NA NA NA NA NA NA ...

$ SRE_time_L1_S1 : num [1:7884] NA NA NA NA NA NA NA NA NA NA ...

$ id : int [1:7884] NA NA NA NA NA NA NA NA NA NA ...

$ baseline1.hazard.values: num [1:16] 0 0.333 0.667 1 1.333 ...

$ Yjoint :List of 2

..$ Y_L1 : num [1:7884] 1.865 1.824 -0.196 -2.188 -4.427 ...

..$ y1..coxph: num [1:7884] NA NA NA NA NA NA NA NA NA NA ...We can see that similar to the structure introduced before, the data size is increased to combine in long-vector format the data contribution to each likelihood, where the first part of the vector is for the longitudinal submodel while the rest is for survival. The outcome is a list with first component the longitudinal outcome and second component the survival outcome. Let’s for example have a look at the data for the first individual:

id1 <- which(M_jm$.args$data$IDIntercept_L1==1 |

M_jm$.args$data$expand1..coxph==1)

length(id1)[1] 6There are 6 lines in the INLA-format data that corresponds to the first individual (while the original data contains 2 repeated measurements of the longitudinal marker and one event time). First we can look at the longitudinal components:

sapply(M_jm$.args$data[c(1:6,8,9)], function(x) round(x[id1], 2)) Intercept_L1 time_L1 trt_L1 time.X.trt_L1 IDIntercept_L1 IDtime_L1 Wtime_L1 Y_L1

[1,] 1 0 1 0 1 501 0 1.86

[2,] 1 1 1 1 1 501 1 1.82

[3,] NA NA NA NA NA NA NA NA

[4,] NA NA NA NA NA NA NA NA

[5,] NA NA NA NA NA NA NA NA

[6,] NA NA NA NA NA NA NA NAWe can see the first 2 lines corresponds to the longitudinal submodel, where the random intercept id number is 1 and the random slope id number is 501 since correlated random effects must have an unique id and the sample size includes 500 individuals (as illustrated in Section 3.2.4). The last column shows the outcome values for these two measurement occasions. Now we can look at the components of the survival part:

sapply(M_jm$.args$data[c(11,13,18,19,10)], function(x) round(x[id1], 2)) E..coxph baseline1.hazard SRE_Intercept_L1_S1 SRE_time_L1_S1 y1..coxph

[1,] NA NA NA NA NA

[2,] NA NA NA NA NA

[3,] 0.33 0.00 1 501 0

[4,] 0.33 0.33 1 501 0

[5,] 0.33 0.67 1 501 0

[6,] 0.24 1.00 1 501 1We can see how the remaining 4 lines are associated to the decomposition of the follow-up of the survival time (to capture the evolution of the baseline hazard during the follow-up), as introduced in Section 2.2.3. The columns SRE_Intercept_L1_S1 and SRE_time_L1_S1 corresponds to each random effect shared in the survival model, where the ids are matched with values from columns IDIntercept_L1 and IDtime_L1 from the longitudinal part. The names explicitly informs about the role of these columns, SRE for shared random effects, Intercept/time for which random effect is shared, L1_S1 to indicate that the first longitudinal is sharing in the first survival (useful in case of multiple longitudinal and/or survival as illustrated later in this chapter).

We can also have a look at the formula, where we need to introduce the shared and scaled random effects. The random effects are defined with the iidkd parametrization introduced in Section 3.2.4:

cat(attr(terms(M_jm$.args$formula), which = "term.labels")[7])f(IDIntercept_L1, WIntercept_L1, model = "iidkd", order = 2, n = 1000, constr = F, hyper = list(theta1 = list(param = c(10, 1, 1, 0))))cat(attr(terms(M_jm$.args$formula), which = "term.labels")[8])f(IDtime_L1, Wtime_L1, copy = "IDIntercept_L1")In order to share and scale them, another f() is defined, which creates a copy of each random effect:

cat(attr(terms(M_jm$.args$formula), which = "term.labels")[9])f(SRE_Intercept_L1_S1, copy = "IDIntercept_L1", hyper = list(beta = list(fixed = FALSE, param = c(0, 1), initial = 0.1)))cat(attr(terms(M_jm$.args$formula), which = "term.labels")[10])f(SRE_time_L1_S1, copy = "IDtime_L1", hyper = list(beta = list(fixed = FALSE, param = c(0, 1), initial = 0.1)))The argument fixed=FALSE means that the random effect is scaled in the shared likelihood while setting this argument to TRUE would only share the random effect without scaling parameters \(\gamma\). The prior for the scaling parameters is Gaussian with mean 0 and standard deviation 1 (it is important for numerical stability to keep a reasonable range of values for the prior on this parameter since extreme values associated to extreme values of the longitudinal part can create numerical issues).

We can print the summary of the model (with the argument sdcor=TRUE to display standard deviations and correlations instead of the default variances and covariances):

summary(M_jm, sdcor=TRUE)Longitudinal outcome (gaussian)

mean sd 0.025quant 0.5quant 0.975quant

Intercept_L1 0.9724 0.0638 0.8473 0.9724 1.0976

time_L1 -0.9918 0.0494 -1.0883 -0.9920 -0.8943

trt_L1 1.0817 0.0897 0.9057 1.0817 1.2576

time:trt_L1 0.5057 0.0673 0.3735 0.5057 0.6377

Res. err. (sd) 0.5142 0.0109 0.4932 0.5140 0.5360

Random effects standard deviation / correlation (L1)

mean sd 0.025quant 0.5quant 0.975quant

Intercept_L1 0.9799 0.0366 0.9110 0.9785 1.0559

time_L1 0.7028 0.0275 0.6487 0.7028 0.7578

Intercept_L1:time_L1 0.6174 0.0422 0.5258 0.6187 0.6940

Survival outcome

mean sd 0.025quant 0.5quant 0.975quant

Baseline risk (variance)_S1 0.1015 0.0485 0.0323 0.0935 0.2191

Association longitudinal - survival

mean sd 0.025quant 0.5quant 0.975quant

SRE_Intercept_L1_S1 0.5323 0.1526 0.2232 0.5353 0.8238

SRE_time_L1_S1 0.2683 0.2258 -0.1601 0.2630 0.7286

log marginal-likelihood (integration) log marginal-likelihood (Gaussian)

-3824.664 -3820.221

Deviance Information Criterion: 5859.773

Widely applicable Bayesian information criterion: 5854.167

Computation time: 4.12 secondsThe summary first shows summary statistics over the posterior marginals of the fixed effects and the residual error of the longitudinal submodel, followed by the random effects and the parameters related to survival outcomes (here for simplicity, we did not include any effect in the survival submodel other than the effects coming from the longitudinal submodel but their inclusion is straightforward, as shown in Chapter 2).

Finally, the summary shows the posterior marginals for the association parameters, that scale each random effect. These parameters, SRE_Intercept_L1_S1 and SRE_time_L1_S1, quantify the link between the unobserved, subject-specific characteristics that govern the longitudinal trajectory and the hazard of the event. Their effect is on the log scale (i.e., log-hazard ratios) but we can convert to hazard ratios with the argument hr=TRUE in the call of the summary:

round(summary(M_jm, hr=TRUE)$AssocLS,2) exp(mean) sd 0.025quant 0.5quant 0.975quant

SRE_Intercept_L1_S1 1.72 0.26 1.26 1.71 2.27

SRE_time_L1_S1 1.34 0.31 0.86 1.30 2.05The interpretation is similar to standard hazard ratios, for example an individual with a 1 unit higher value of the longitudinal marker compared to population average at baseline, is associated to a risk increased by 72% [26% ; 127%] of the event at any given time, all else being equal. Since both the association parameters are positive, it suggests that both a higher baseline level and a more rapid increase in the longitudinal marker compared to population average, are associated with a higher risk of event.

The baseline hazard we simulated is constant and equal to 0.1 (baseScale), we can see that it is properly captured for each interval of the follow-up:

summary(M_jm)$Baseline[[1]] time lower median upper

[1,] 0.0000000 0.08183166 0.10589999 0.1504024

[2,] 0.3333333 0.08169697 0.10376657 0.1418301

[3,] 0.6666667 0.07701453 0.09695350 0.1238616

[4,] 1.0000000 0.07325239 0.09362257 0.1175912

[5,] 1.3333333 0.07025076 0.09122904 0.1137004

[6,] 1.6666667 0.07219124 0.09172364 0.1159912

[7,] 2.0000000 0.06602948 0.08784485 0.1092652

[8,] 2.3333333 0.06167272 0.08547907 0.1066681

[9,] 2.6666667 0.06455207 0.08639074 0.1079944

[10,] 3.0000000 0.06519347 0.08667094 0.1087888

[11,] 3.3333333 0.06501699 0.08667849 0.1092238

[12,] 3.6666667 0.06607493 0.08751891 0.1113219

[13,] 4.0000000 0.06442162 0.08705330 0.1113025

[14,] 4.3333333 0.06639394 0.08903937 0.1172154

[15,] 4.6666667 0.06574964 0.09009131 0.1240071

[16,] 5.0000000 0.06192667 0.09007970 0.1325942We can also plot the posterior marginals of all the model parameters and hyperparameters with the plot() function:

library(ggplot2)

library(ggpubr)

Plots_jm <- plot(M_jm)

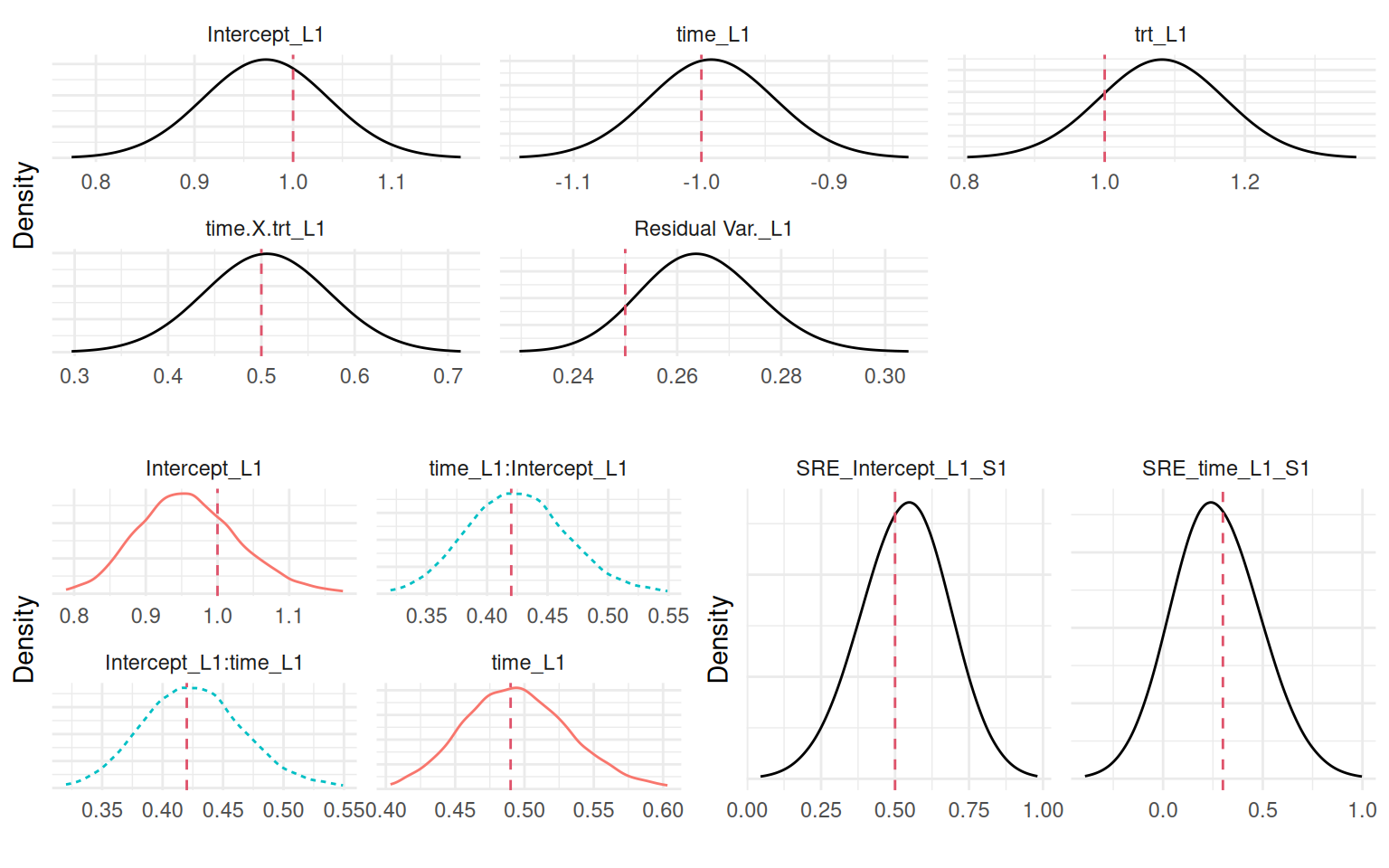

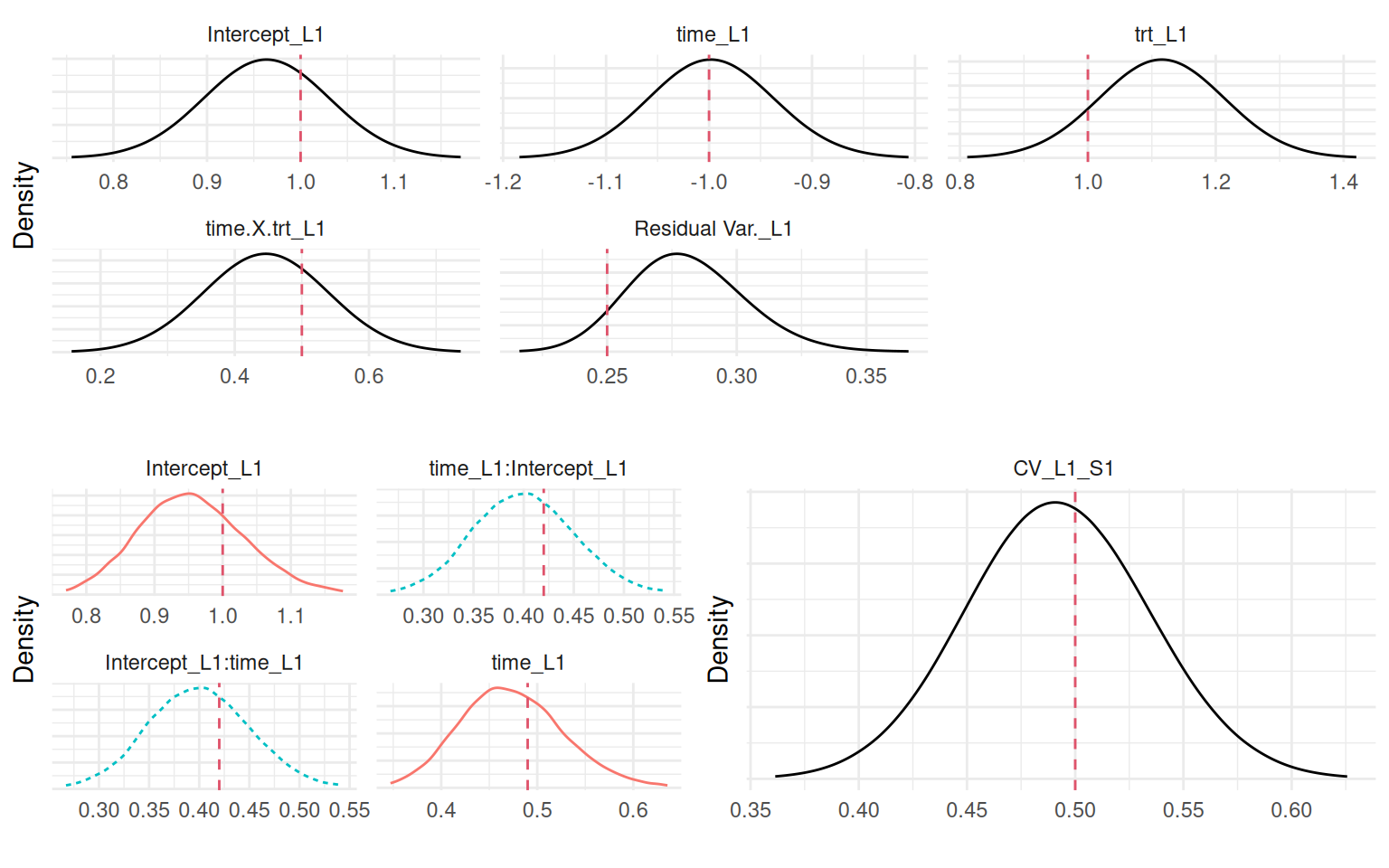

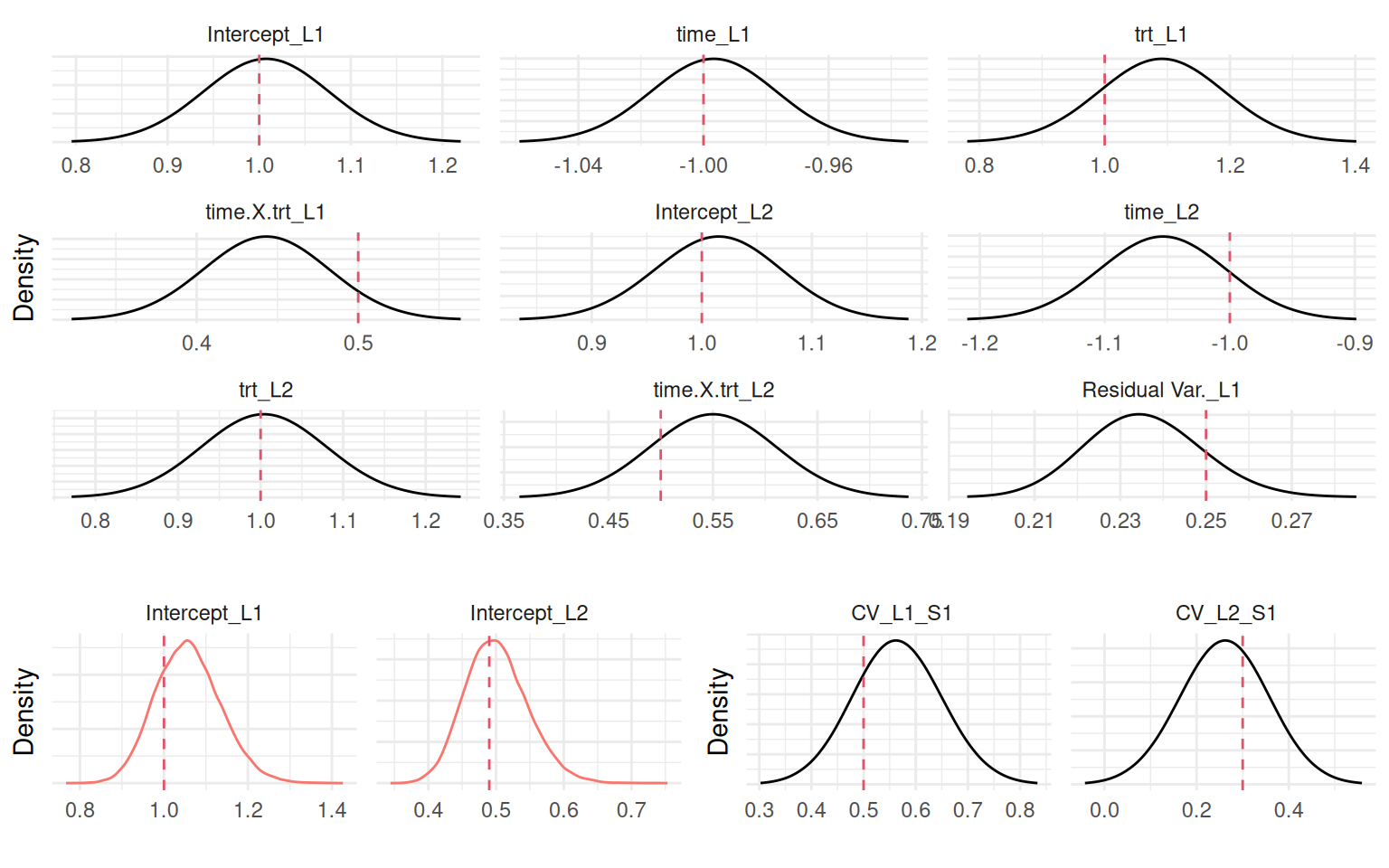

names(Plots_jm)[1] "Outcomes" "Covariances" "Associations" "Baseline" The plot() function returns a series of plots, where Outcomes contains posterior marginals for all the fixed effects and some family parameters such as residual error terms. Covariances gives the variances and covariances (or standard deviations and correlations with sdcor=TRUE) of the random effects. Associations gives the posterior marginals of all the parameters that quantifies the association between models and Baseline corresponds to plots related to baseline hazards.

Figure 4.1 shows all these plots:

# Save plots syntax to reuse throughout the chapter

P1 <- "Plots_jm$Outcomes$L1 + theme_minimal() +

theme(axis.text.y=element_blank(), axis.ticks.y=element_blank())"

P2 <- "Plots_jm$Covariances$L1 + theme_minimal() +

theme(axis.text.y=element_blank(), axis.ticks.y=element_blank(),

legend.position='none')"

P3 <- "Plots_jm$Associations + theme_minimal() +

theme(axis.text.y=element_blank(), axis.ticks.y=element_blank())"

ggarrange(eval(parse(text=P1)),

ggarrange(eval(parse(text=P2)),

eval(parse(text=P3)), ncol=2), nrow=2)

Figure 4.1 shows that the model recovered all the true parameter values. The most important ones being the association parameters since they correspond to the scaling parameters that link both submodels. As introduced in Section 1.5.3, we can see the summary of each individual random effect’s posterior distribution in the argument summary.random of the fitted object. We can compare the true values with estimates (Figure 4.2):

par(mfrow=c(1,2), mar = c(4,4,2,0.3), cex=0.8)

plot(MVnorm[,1], M_jm$summary.random$IDIntercept_L1$mean[1:n],

xlab="True", ylab="Estimated", main="Random intercept")

plot(MVnorm[,2], M_jm$summary.random$IDtime_L1$mean[-(1:n)],

xlab="True", ylab="Estimated", main="Random slope")

As we can see, the model did recover the individual random effects properly. This model can be used to predict the longitudinal and survival outcomes for new individuals. Let’s consider two individuals with random effects values defined as quantiles of the distribution of random effects (we consider 10% and 90% quantiles for the two individuals, so they have very different individual profiles):

SD_m <- summary(M_jm, sdcor=TRUE)$ReffList[[1]][,"mean"][1:2] # sd

SD_0 <- qnorm(p = 0.1, sd = SD_m[1]) # quantile 0.1 random intercept

SD_1 <- qnorm(p = 0.1, sd = SD_m[2]) # quantile 0.1 random slope

Y_id1 <- rnorm(6, b_0 + SD_0 + (b_1 + SD_1)*mestime, b_e) # observed id 1

Y_id2 <- rnorm(6, b_0 - SD_0 + (b_1 - SD_1)*mestime, b_e) # observed id 2

NewData <- data.frame(id=rep(1:2, each=length(mestime)),

time=mestime, Y=c(Y_id1, Y_id2), trt=0);NewData id time Y trt

1 1 0 0.3421689 0

2 1 1 -1.7594623 0

3 1 2 -4.2267953 0

4 1 3 -5.3135691 0

5 1 4 -7.9560552 0

6 1 5 -10.5819121 0

7 2 0 1.9690854 0

8 2 1 3.2042589 0

9 2 2 2.3057939 0

10 2 3 1.5305130 0

11 2 4 2.0125609 0

12 2 5 0.7823775 0Now we can predict for these two individuals their individual longitudinal trajectory as well as their survival probabilities (see Section 1.5.5 for an introduction to survival curves with INLAjoint):

P <- predict(M_jm, NewData, horizon=10, survival=TRUE)

str(P)List of 2

$ PredL:'data.frame': 102 obs. of 8 variables:

..$ id : Factor w/ 2 levels "1","2": 1 1 1 1 1 1 1 1 1 1 ...

..$ time : num [1:102] 0 0.204 0.408 0.612 0.816 ...

..$ Outcome : chr [1:102] "Y" "Y" "Y" "Y" ...

..$ Mean : num [1:102] 0.242 -0.181 -0.603 -1.026 -1.448 ...

..$ Sd : num [1:102] 0.329 0.315 0.303 0.293 0.284 ...

..$ quant0.025: num [1:102] -0.393 -0.788 -1.19 -1.596 -2.005 ...

..$ quant0.5 : num [1:102] 0.243 -0.18 -0.602 -1.025 -1.447 ...

..$ quant0.975: num [1:102] 0.89055 0.44006 -0.00585 -0.45421 -0.89437 ...

$ PredS:'data.frame': 102 obs. of 13 variables:

..$ id : Factor w/ 2 levels "1","2": 1 1 1 1 1 1 1 1 1 1 ...

..$ time : num [1:102] 0 0.204 0.408 0.612 0.816 ...

..$ Outcome : chr [1:102] "S_1" "S_1" "S_1" "S_1" ...

..$ Haz_Mean : num [1:102] 0 0 0 0 0 0 0 0 0 0 ...

..$ Haz_Sd : num [1:102] 0 0 0 0 0 0 0 0 0 0 ...

..$ Haz_quant0.025 : num [1:102] 0 0 0 0 0 0 0 0 0 0 ...

..$ Haz_quant0.5 : num [1:102] 0 0 0 0 0 0 0 0 0 0 ...

..$ Haz_quant0.975 : num [1:102] 0 0 0 0 0 0 0 0 0 0 ...

..$ Surv_Mean : num [1:102] 1 1 1 1 1 1 1 1 1 1 ...

..$ Surv_Sd : num [1:102] 1 1 1 1 1 1 1 1 1 1 ...

..$ Surv_quant0.025: num [1:102] 1 1 1 1 1 1 1 1 1 1 ...

..$ Surv_quant0.5 : num [1:102] 1 1 1 1 1 1 1 1 1 1 ...

..$ Surv_quant0.975: num [1:102] 1 1 1 1 1 1 1 1 1 1 ...Here the object P contains a list with the predictions for the longitudinal outcome PredL and the predictions for the survival outcome PredS. Both have been introduced in Section 1.5.5, and thus we do not give additional details here.

An important point to note here is that we predict the processes at future times, where we have not observed any data (all data is observed within 5 units of time and we predict at 10). In most situations, these predictions are tricky since we did not observe data and thus have to rely on the model predictions that could be far from reality. With our random walk baseline, the baseline hazard is based on the data and therefore it is not straightforward to predict in areas where no data have been observed., In this context, INLAjoint assume linear baseline hazard beyond the last observed data point. In this specific example, this choice is fine since we did simulate with constant baseline but in other situations, it may not be appropriate and predictions should be done in areas where the model did learn from the data or other baseline hazard options should be considered, such as parametric options or other proper spline models.

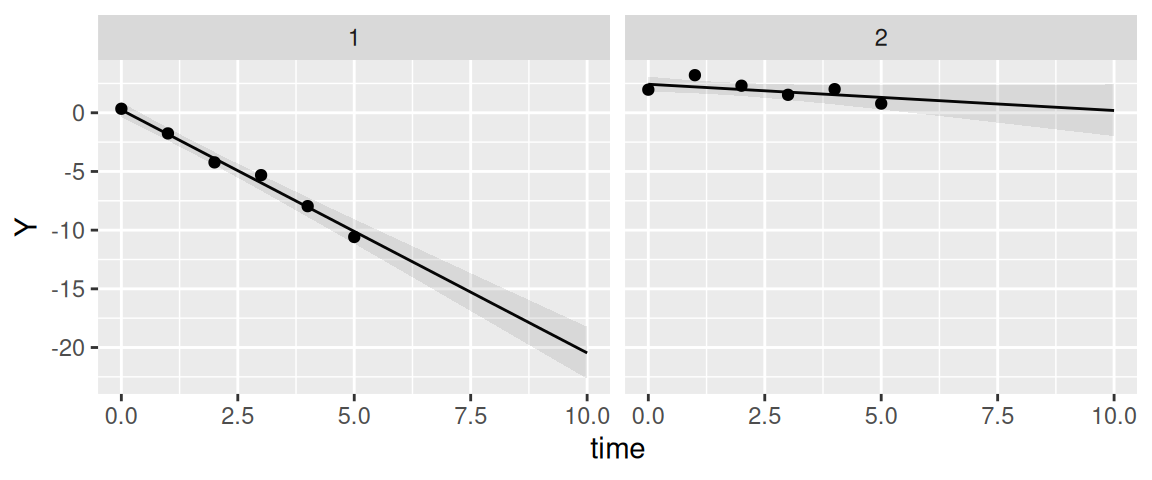

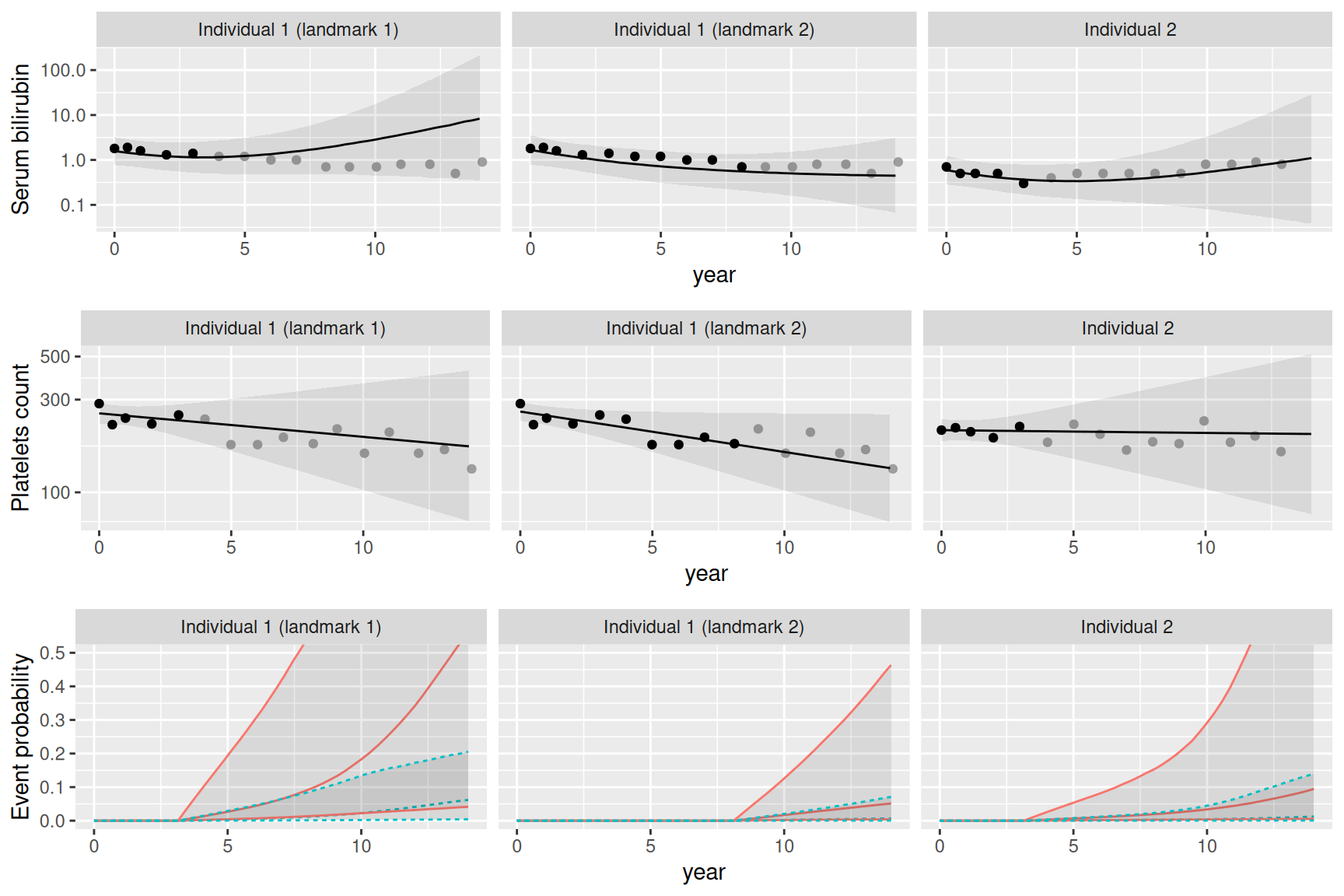

We can plot the estimated longitudinal trajectories, where the observed data points are displayed as black dots:

ggplot(P$PredL, aes(x = time, y = quant0.5)) + geom_line() +

geom_ribbon(aes(ymin=quant0.025, ymax=quant0.975), alpha=0.1) +

geom_point(data=NewData, aes(x=time, y=Y)) + ylab("Y") +

theme(legend.position="none") + facet_wrap(~id)

The corresponding survival probability over time is shown in Figure 4.4:

tps <- seq(5,10,len=1e3)

datTrue <- data.frame(id=rep(1:2, each=1e3), time=rep(tps,2), trt=0)

ggplot(P$PredS, aes(x = time, y = Surv_quant0.5)) + geom_line() +

geom_ribbon(aes(ymin=Surv_quant0.025, ymax=Surv_quant0.975), alpha=0.1) +

theme(legend.position="none") + ylab("Survival probability") +

facet_wrap(~id)

We can see that INLAjoint automatically assumes the individual was not at risk for the event until the last longitudinal measurement. This is a common assumption since in most cases the event can be recorded when assessing longitudinal measurement, and therefore when the individual produces longitudinal measurements it also means the individual is event-free at this time. In some situations, we may consider the individual is at risk at a specific time, which can be defined with the argument Csurv. For example we can consider our 2 individuals were at risk since time 0:

P <- predict(M_jm, NewData, horizon=10, survival=TRUE, Csurv=0)

tps <- seq(5,10,len=1e3)

ggplot(P$PredS, aes(x = time, y = Surv_quant0.5)) + geom_line() +

geom_ribbon(aes(ymin=Surv_quant0.025, ymax=Surv_quant0.975), alpha=0.1) +

theme(legend.position="none") + facet_wrap(~id)

4.3.2 Time-dependent individual deviation from the average longitudinal trajectory

Building upon the shared random effects association structure, a more sophisticated approach involves incorporating a time-dependent component into the survival submodel. In this association structure, the survival model includes a linear combination of the random effects from the longitudinal model. This accounts for the dynamic nature of the relationship between the longitudinal measurements and the survival outcomes, where the event risk depends on the individual deviation from the population average of the longitudinal marker trajectory.

The purpose of this time-dependent association structure is to capture the relationship between the evolving longitudinal and survival processes. By allowing the shared component to change over time, the model can better reflect the reality that an individual’s risk of an event may vary as their longitudinal trajectory changes. While this structure introduces additional complexity compared to the previous shared random effects association, it can lead to more accurate predictions and inference. The model is defined as follows: \[\begin{equation*}

\left\{ \begin{array}{lc}

\textrm{E}[Y_{i}(t)]= \beta_0 + b_{i0} + (\beta_1 + b_{i1})\cdot t + \beta_2\cdot trt_i + \beta_3\cdot trt_i\cdot t \\

\lambda_i(t)=\lambda_0(t)\exp\left(\gamma\cdot \left(b_{i0} + b_{i1}\cdot t\right)\right)

\end{array}

\right.

\end{equation*}\] Simulating an event time becomes more complex when the hazard function itself includes a time-dependent component. Unlike the shared random effects model in the previous section where the term in the exponent was constant over time for a given individual, here it changes linearly with time \(t\). This means the hazard rate is not constant, even with an exponential baseline. To generate an event time using the inverse transform sampling method, we must first compute the cumulative hazard function \(\Lambda_i(t)=\int_0^t \lambda_i(s)\mathrm{d}s\), needed for the computation of survival. When the linear predictor includes time-dependent components, there is often no closed-form analytical solution for the integral over the risk. This challenge requires the use of specialized numerical algorithms to generate event times from a complex, time-varying hazard function. These methods typically work by discretizing the follow-up time into a fine grid of small intervals. Within each interval, the time-dependent covariate (and thus the hazard) can be treated as approximately constant, allowing for the step-wise calculation of the cumulative hazard. An event time is then generated based on this numerically approximated survival function. The R package PermAlgo uses a permutation algorithm to generate event times conditional on time-dependent covariates by performing a one-to-one matching of subjects to a set of pre-generated event times based on a probability law derived from the Cox partial likelihood. Of note, permAlgo cannot provide the true baseline hazard because its core permutation algorithm is specifically designed to generate survival data without ever needing to specify or compute a baseline hazard function.

gap2 <- 0.01 # used to generate a lot of time points for PermAlgo

mestime2 <- seq(0,fw,gap2) # measurement times

time2 <- rep(mestime2, n) # time column

n_i2 <- length(mestime2)

trt2 <- rep(trt, each=n_i2)

b12 <- rep(MVnorm[,1], each=n_i2)

b22 <- rep(MVnorm[,2], each=n_i2)

SRE_i <- b12 + b22*time2 # linear combination of random effects to share

# Permutation algorithm to generate survival times that depends on SRE_i

library(PermAlgo)

DatTmp <- permalgorithm(n, n_i2, Xmat=matrix(SRE_i, ncol=1), betas=s_1)

DatTmp2 <- DatTmp[which(!duplicated(DatTmp$Id, fromLast = T)),

c("Id","Event","Stop")]

datJM2_S <- data.frame(id = 1:n, eventTimes = mestime2[DatTmp2$Stop+1],

eventIndic = DatTmp2$Event, trt = trt)

datJM2_L <- datJM_Lt[-unlist(sapply(1:n, function(x)

which(datJM_Lt$time>=datJM2_S$eventTimes[datJM2_S$id==x] &

datJM_Lt$id==x))),]Now we have our two datasets with the desired structure. We can fit the joint model, the call of the joint() function is the same as the previous model except for the association parameter assoc="SRE" which stands for “shared random effects” (i.e., sharing the individual deviation from the mean at time t as defined by the random effects).

M_jmSRE <- joint(formSurv = inla.surv(eventTimes, eventIndic) ~ -1,

formLong = Y ~ time * trt + (1 + time | id),

dataSurv = datJM2_S, dataLong=datJM2_L,

id="id", timeVar="time", assoc="SRE")The data structure is different from the previous model because now we need to account for the time-dependent association. With R-INLA, this is done by including an additional layer in the data to compute the linear combination of parameters to share.

str(M_jmSRE$.args$data)List of 24

$ Intercept_L1 : num [1:6878] 1 1 1 1 1 1 1 1 1 1 ...

$ time_L1 : num [1:6878] 0 1 0 1 0 1 0 1 2 0 ...

$ trt_L1 : num [1:6878] 1 1 0 0 1 1 1 1 1 1 ...

$ time.X.trt_L1 : num [1:6878] 0 1 0 0 0 1 0 1 2 0 ...

$ IDIntercept_L1 : num [1:6878] 1 1 2 2 3 3 4 4 4 5 ...

$ IDtime_L1 : num [1:6878] 501 501 502 502 503 503 504 504 504 505 ...

$ WIntercept_L1 : num [1:6878] 1 1 1 1 1 1 1 1 1 1 ...

$ Wtime_L1 : num [1:6878] 0 1 0 1 0 1 0 1 2 0 ...

$ usre1 : num [1:6878] NA NA NA NA NA NA NA NA NA NA ...

$ wsre1 : num [1:6878] NA NA NA NA NA NA NA NA NA NA ...

$ Y_L1 : num [1:6878] 1.865 1.824 -0.196 -2.188 2.295 ...

$ SRE_L1_S11 : num [1:6878] NA NA NA NA NA NA NA NA NA NA ...

$ y1..coxph : num [1:6878] NA NA NA NA NA NA NA NA NA NA ...

$ E..coxph : num [1:6878] NA NA NA NA NA NA NA NA NA NA ...

$ expand1..coxph : int [1:6878] NA NA NA NA NA NA NA NA NA NA ...

$ baseline1.hazard : num [1:6878] NA NA NA NA NA NA NA NA NA NA ...

$ baseline1.hazard.idx : num [1:6878] NA NA NA NA NA NA NA NA NA NA ...

$ baseline1.hazard.time : num [1:6878] NA NA NA NA NA NA NA NA NA NA ...

$ baseline1.hazard.length: num [1:6878] NA NA NA NA NA NA NA NA NA NA ...

$ Intercept_S1 : num [1:6878] NA NA NA NA NA NA NA NA NA NA ...

$ SRE_L1_S1 : num [1:6878] NA NA NA NA NA NA NA NA NA NA ...

$ id : int [1:6878] NA NA NA NA NA NA NA NA NA NA ...

$ baseline1.hazard.values: num [1:16] 0 0.325 0.651 0.976 1.301 ...

$ Yjoint :List of 3

..$ Y_L1 : num [1:6878] 1.865 1.824 -0.196 -2.188 2.295 ...

..$ SRE_L1_S11: num [1:6878] NA NA NA NA NA NA NA NA NA NA ...

..$ y1..coxph : num [1:6878] NA NA NA NA NA NA NA NA NA NA ...We can see the outcome now is a list of 3 outcomes where the second one is there to compute the linear combination of parameters to share from the longitudinal to the survival model. We can have a look at the data structure for the first individual, starting with the longitudinal components:

id1 <- which(M_jmSRE$.args$data$IDIntercept_L1==1 | M_jmSRE$.args$data$expand1..coxph==1)

sapply(M_jmSRE$.args$data[c(1:6,8,11)], function(x) round(x[id1], 2)) Intercept_L1 time_L1 trt_L1 time.X.trt_L1 IDIntercept_L1 IDtime_L1 Wtime_L1 Y_L1

[1,] 1 0 1 0 1 501 0.00 1.86

[2,] 1 1 1 1 1 501 1.00 1.82

[3,] NA NA NA NA 1 501 0.16 NA

[4,] NA NA NA NA 1 501 0.49 NA

[5,] NA NA NA NA 1 501 0.81 NA

[6,] NA NA NA NA 1 501 1.14 NA

[7,] NA NA NA NA NA NA NA NA

[8,] NA NA NA NA NA NA NA NA

[9,] NA NA NA NA NA NA NA NA

[10,] NA NA NA NA NA NA NA NAWe can see that the first 2 lines correspond to the longitudinal submodel while the next 4 lines are used to compute the linear combination of random effects, these lines only includes the id of the random effects and the weight of the random slope (note that the weight corresponds to the middle of the corresponding survival interval where the linear combination of parameters is shared), other fixed effects are not shared and thus set to NA on these lines. We can also have a look at the survival part:

sapply(M_jmSRE$.args$data[c(14,16,8,9,21,13)], function(x) round(x[id1], 2)) E..coxph baseline1.hazard Wtime_L1 usre1 SRE_L1_S1 y1..coxph

[1,] NA NA 0.00 NA NA NA

[2,] NA NA 1.00 NA NA NA

[3,] NA NA 0.16 1 NA NA

[4,] NA NA 0.49 2 NA NA

[5,] NA NA 0.81 3 NA NA

[6,] NA NA 1.14 4 NA NA

[7,] 0.33 0.00 NA NA 1 0

[8,] 0.33 0.33 NA NA 2 0

[9,] 0.33 0.65 NA NA 3 0

[10,] 0.21 0.98 NA NA 4 1The column usre1 and SRE_L1_S1 are used to link the linear combination of parameters to share and the survival part. For example we can see from line 3 that the linear combination of the random effects is computed at time ~0.16, which is the middle of the first interval of the survival submodel which starts at time 0 and ends at time 0.33 (line 7). We therefore assume this interval is associated to the linear combination of the random effects computed at the middle of the interval (more intervals then leads to a more detailed representation of the longitudinal marker in the survival process). The formula to fit this model is also more involved with R-INLA, since we need to indicate that we want to compute the linear combination of random effects, which is done as follows:

cat(attr(terms(M_jmSRE$.args$formula), which = "term.labels")[9])f(usre1, wsre1, model = "iid", hyper = list(prec = list(initial = -6, fixed = TRUE)), constr = F)This is required to create a specific outcome (with value 0) that acts as “fake” Gaussian observations where the linear combination of parameters is computed at specified time points. Then we need to indicate that we want to share this linear combination of parameters in the survival submodel:

cat(attr(terms(M_jmSRE$.args$formula), which = "term.labels")[10])f(SRE_L1_S1, copy = "usre1", hyper = list(beta = list(fixed = FALSE, param = c(0, 0.01), initial = 0.1)))Of note, when functions of time are included in the longitudinal model, it is important to provide the function as presented in Section 3.2.5 because the shared part needs to be computed at many time points internally (i.e., the value of the shared component is computed at the middle of each time interval for each individual) to properly account for its evolution over time in the risk function.

We can have a look at the summary of our model fitted with INLAjoint:

summary(M_jmSRE, sdcor=TRUE)Longitudinal outcome (gaussian)

mean sd 0.025quant 0.5quant 0.975quant

Intercept_L1 0.9895 0.0647 0.8626 0.9895 1.1166

time_L1 -1.0640 0.0581 -1.1773 -1.0642 -0.9495

trt_L1 1.0311 0.0901 0.8543 1.0311 1.2078

time:trt_L1 0.4990 0.0739 0.3540 0.4990 0.6440

Res. err. (sd) 0.5161 0.0205 0.4779 0.5153 0.5585

Random effects standard deviation / correlation (L1)

mean sd 0.025quant 0.5quant 0.975quant

Intercept_L1 0.9898 0.0407 0.9155 0.9871 1.0757

time_L1 0.6865 0.0397 0.6117 0.6847 0.7690

Intercept_L1:time_L1 0.5590 0.0673 0.4183 0.5626 0.6807

Survival outcome

mean sd 0.025quant 0.5quant 0.975quant

Baseline risk (variance)_S1 0.7106 0.1485 0.4697 0.693 1.0517

Association longitudinal - survival

mean sd 0.025quant 0.5quant 0.975quant

SRE_L1_S1 0.4728 0.0419 0.3916 0.4723 0.5566

log marginal-likelihood (integration) log marginal-likelihood (Gaussian)

-14039.36 -14035.34

Deviance Information Criterion: 4366.229

Widely applicable Bayesian information criterion: 4328.681

Computation time: 5.57 secondsIn this model, the association is defined by a single coefficient, SRE_L1_S1, which quantifies the association between the subject’s current deviation from the population-average trajectory and his risk of event. This structure is different compared to Section 4.3.1 as it assumes that the deviation from the mean trajectory, rather than its separate components (intercept and slope), is what drives the risk.

This means that the impact of an individual’s latent characteristics on their risk of event changes over time. For example, consider an individual with a high positive random intercept (\(b_{i0} > 0\)) but a strong negative random slope (\(b_{i1}\) < 0). At early time points, his deviation from the mean is positive, leading to an increased hazard (assuming \(\gamma=\) 0.47). However, as time progresses, his trajectory crosses below the population average, and the term (\(b_{0i}+b_{1i}t\)) becomes negative, leading to a decreased hazard compared to population average. This structure allows the model to capture how an individual’s risk profile evolves as his biomarker deviates from the average over the follow-up.

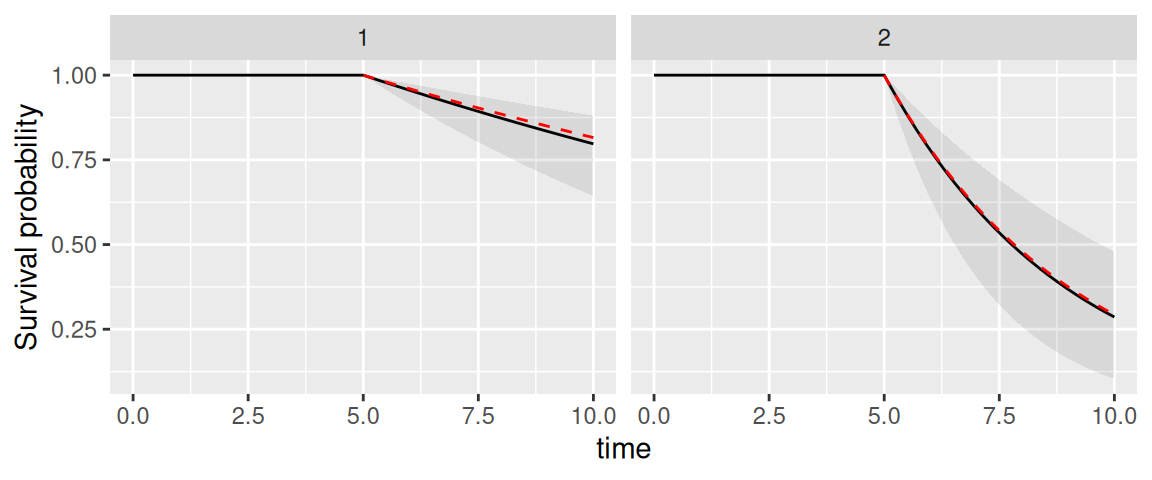

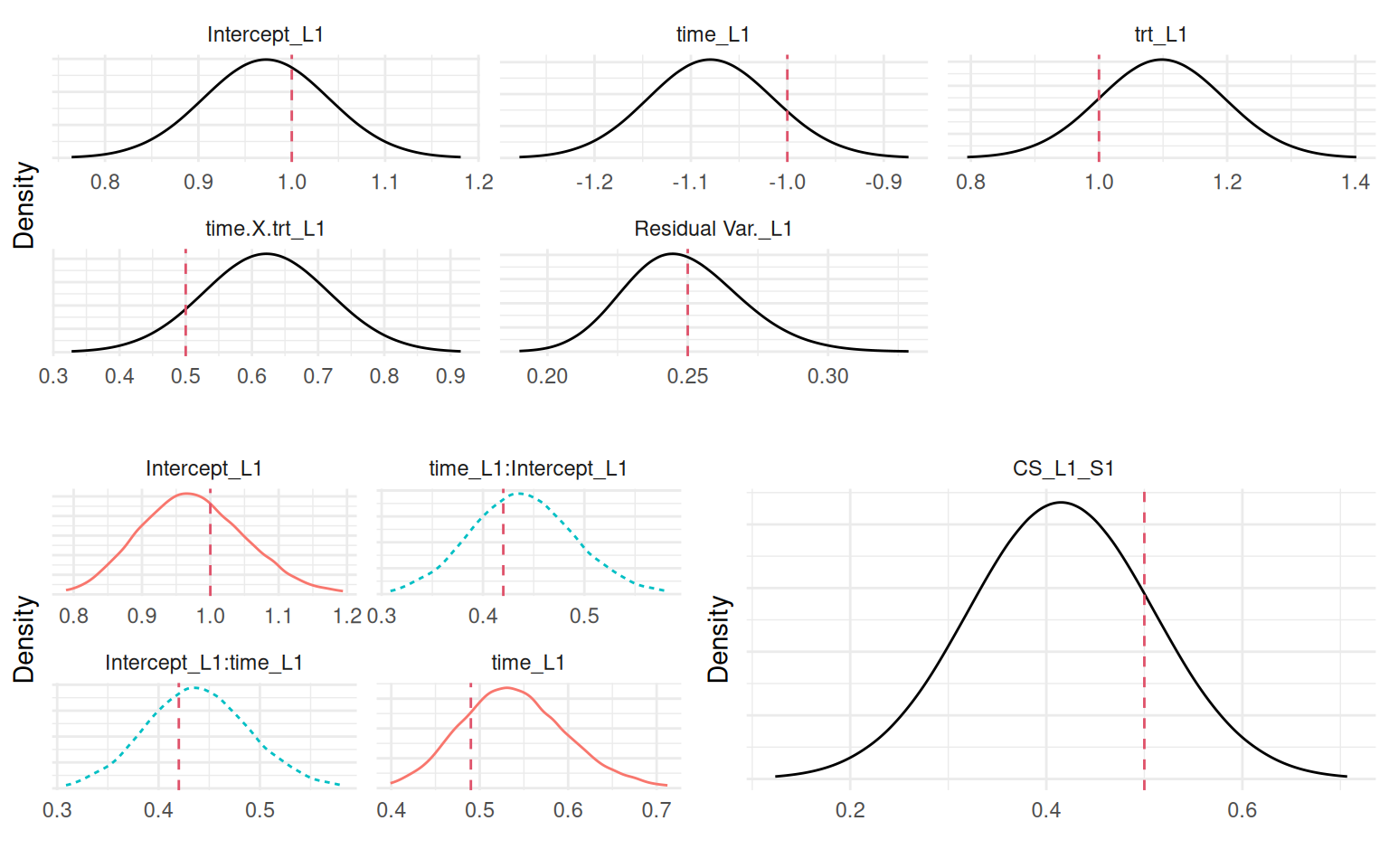

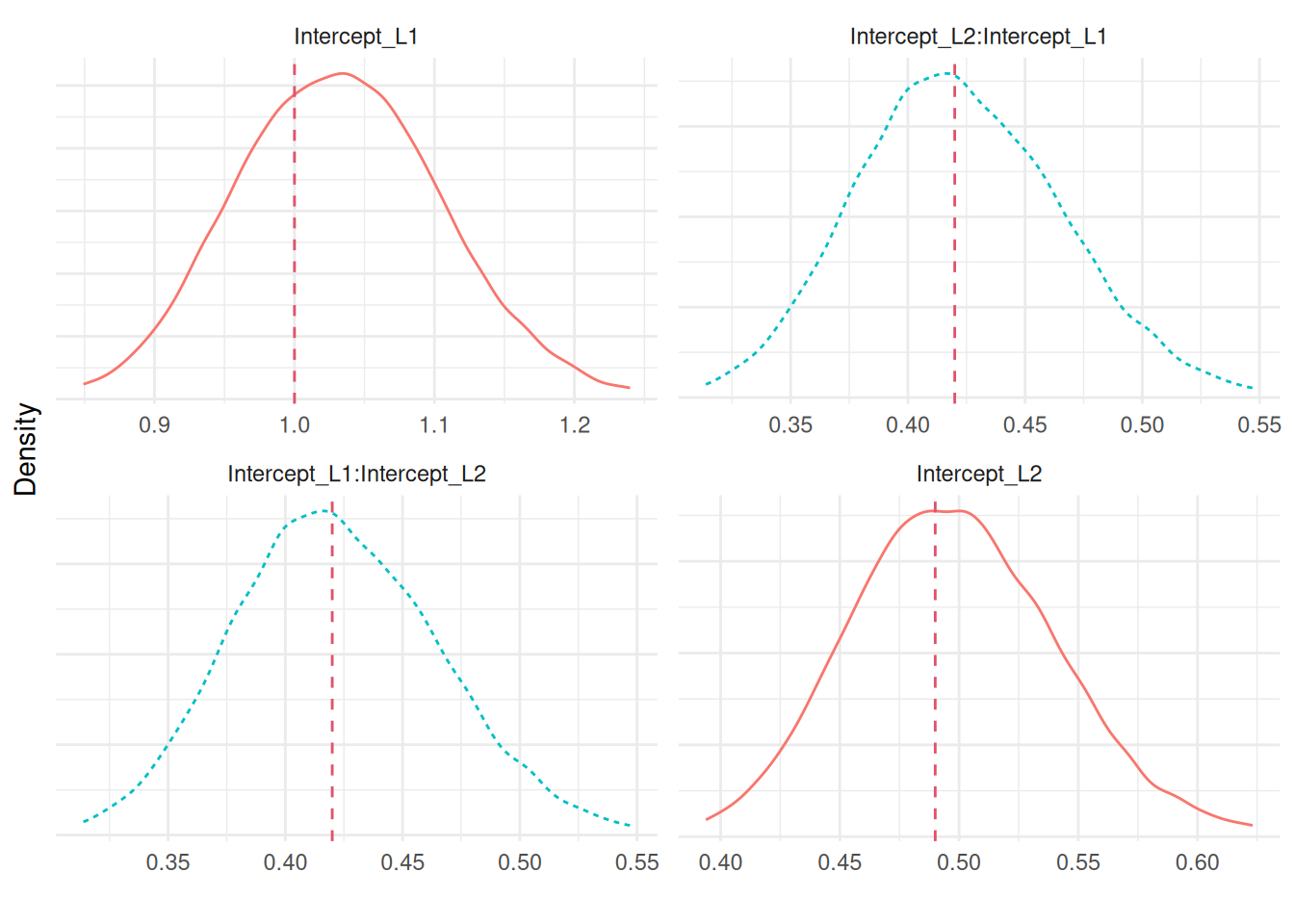

We can plot the posterior marginals of all parameters as shown in Figure 4.6:

Plots_jm <- plot(M_jmSRE)

ggarrange(eval(parse(text=P1)),

ggarrange(eval(parse(text=P2)),

eval(parse(text=P3)), ncol=2), nrow=2)

We can see that the model is able to recover the true values of all the parameters. Since we simulated event times with a permutation algorithm, the true baseline hazard function is assumed unknown and the default random walk is used. We can also predict from this model, let’s for example consider the first two individuals of the dataset used to fit the model and predict at horizon 5:

NewData <- datJM2_L[datJM2_L$id %in% 1:2,]

P <- predict(M_jmSRE, NewData, horizon=5, survival=TRUE)

P_1 <- ggplot(P$PredL, aes(x = time, y = quant0.5)) + geom_line() +

geom_ribbon(aes(ymin=quant0.025, ymax=quant0.975), alpha=0.1) +

geom_point(data=NewData, aes(x=time, y=Y)) + ylab("Y") +

theme(legend.position="none") + facet_wrap(~id)

P_2 <- ggplot(P$PredS, aes(x = time, y = Surv_quant0.5)) + geom_line() +

geom_ribbon(aes(ymin=Surv_quant0.025, ymax=Surv_quant0.975),alpha=0.1) +

theme(legend.position="none") + ylab("Survival probability") +

facet_wrap(~id)

ggarrange(P_1,P_2, nrow=2)

We can see how the uncertainty of the prediction for both outcomes depends on the amount of data provided.

4.3.3 Current value association structure

The current value association structure is similar to the previous one but fixed effects are shared in addition to the random effects, such that the risk of the event depends on the expected value of the linear predictor from the longitudinal submodel, sometimes refered to as the “true” value of the marker since it does not include the residual error (which could be noise coming from the tools that produce the measurement for example). The model is defined as follows: \[\begin{equation*} \left\{ \begin{array}{rl} \textrm{E}[Y_{i}(t)]&= \eta_i(t)\\ &= \beta_0 + b_{i0} + (\beta_1 + b_{i1})\cdot t + \beta_2\cdot trt_i + \beta_3\cdot trt_i\cdot t\\ \lambda_i(t)&=\lambda_0(t)\exp\left(\gamma \cdot \eta_i(t)\right) \end{array} \right. \end{equation*}\] Where the risk of event \(\lambda\) for individual \(i\) at time \(t\) depends on the linear predictor \(\eta\) for individual \(i\) at time \(t\) through the scaling parameter \(\gamma\). The association parameter is interpreted as the log-hazard ratio for a one-unit increase in this true biomarker value. Unlike the SRE structure, the CV structure incorporates the influence of all covariates (e.g., treatment) on the longitudinal marker directly into the risk of event calculation.

We can simulate data from this model with the same strategy as for the previous section, where we first compute the time-dependent component to share (here, the linear predictor without the noise), an we can generate survival times using the permutation algorithm.

CV_i <- b_0 + b12 + (b_1 + b22)*time2 + b_2*trt2 + b_3*trt2*time2

DatTmp <- permalgorithm(n, n_i2, Xmat=matrix(CV_i, ncol=1), betas=c(s_1))

DatTmp2 <- DatTmp[which(!duplicated(DatTmp$Id, fromLast = T)),

c("Id","Event","Stop")]

datJM3_S <- data.frame(id = 1:n, eventTimes = mestime2[DatTmp2$Stop+1],

eventIndic = DatTmp2$Event, trt = trt)

datJM3_L <- datJM_Lt[-unlist(sapply(1:n, function(x)

which(datJM_Lt$time>=datJM3_S$eventTimes[datJM3_S$id==x] &

datJM_Lt$id==x))),]And we can fit the model with the following call, where the association parameter is assoc="CV", which stands for “current value” (of the linear predictor, sometimes referred to as “current level”):

M_jmCV <- joint(formSurv = inla.surv(eventTimes, eventIndic) ~ -1,

formLong = Y ~ time * trt + (1 + time | id),

dataSurv = datJM3_S, dataLong=datJM3_L,

id="id", timeVar="time", assoc="CV")

summary(M_jmCV, sdcor=TRUE)Longitudinal outcome (gaussian)

mean sd 0.025quant 0.5quant 0.975quant

Intercept_L1 0.9637 0.0674 0.8315 0.9636 1.0959

time_L1 -0.9974 0.0610 -1.1162 -0.9977 -0.8767

trt_L1 1.1156 0.0983 0.9227 1.1156 1.3084

time:trt_L1 0.4466 0.0936 0.2632 0.4466 0.6306

Res. err. (sd) 0.5294 0.0210 0.4899 0.5287 0.5724

Random effects standard deviation / correlation (L1)

mean sd 0.025quant 0.5quant 0.975quant

Intercept_L1 0.9778 0.0418 0.8974 0.9774 1.0590

time_L1 0.6877 0.0390 0.6167 0.6872 0.7675

Intercept_L1:time_L1 0.5992 0.0672 0.4564 0.6035 0.7132

Survival outcome

mean sd 0.025quant 0.5quant 0.975quant

Baseline risk (variance)_S1 0.7714 0.1377 0.5402 0.7578 1.0803

Association longitudinal - survival

mean sd 0.025quant 0.5quant 0.975quant

CV_L1_S1 0.492 0.0429 0.4083 0.4918 0.5771

log marginal-likelihood (integration) log marginal-likelihood (Gaussian)

-13745.92 -13741.90

Deviance Information Criterion: 4227.396

Widely applicable Bayesian information criterion: 4189.455

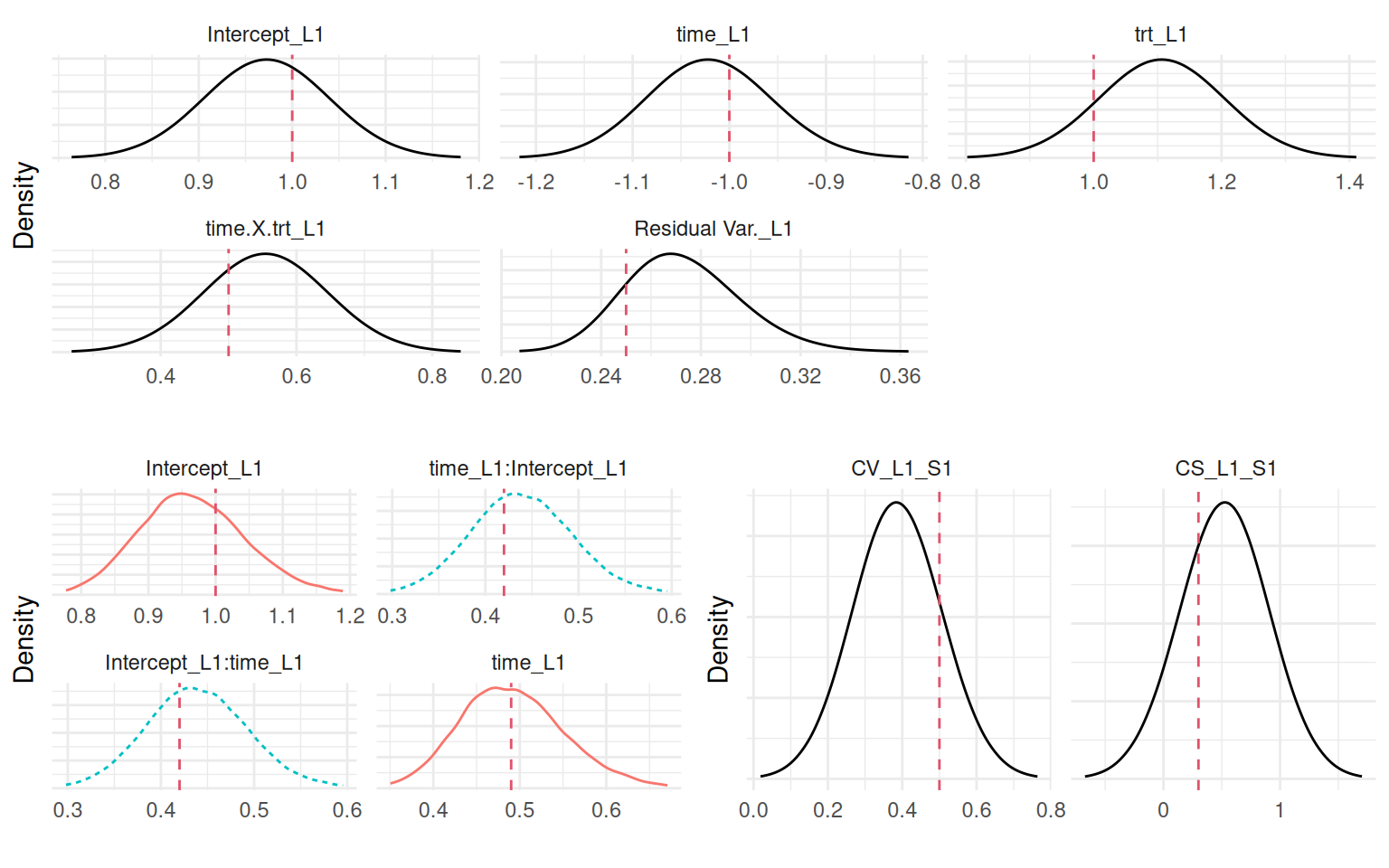

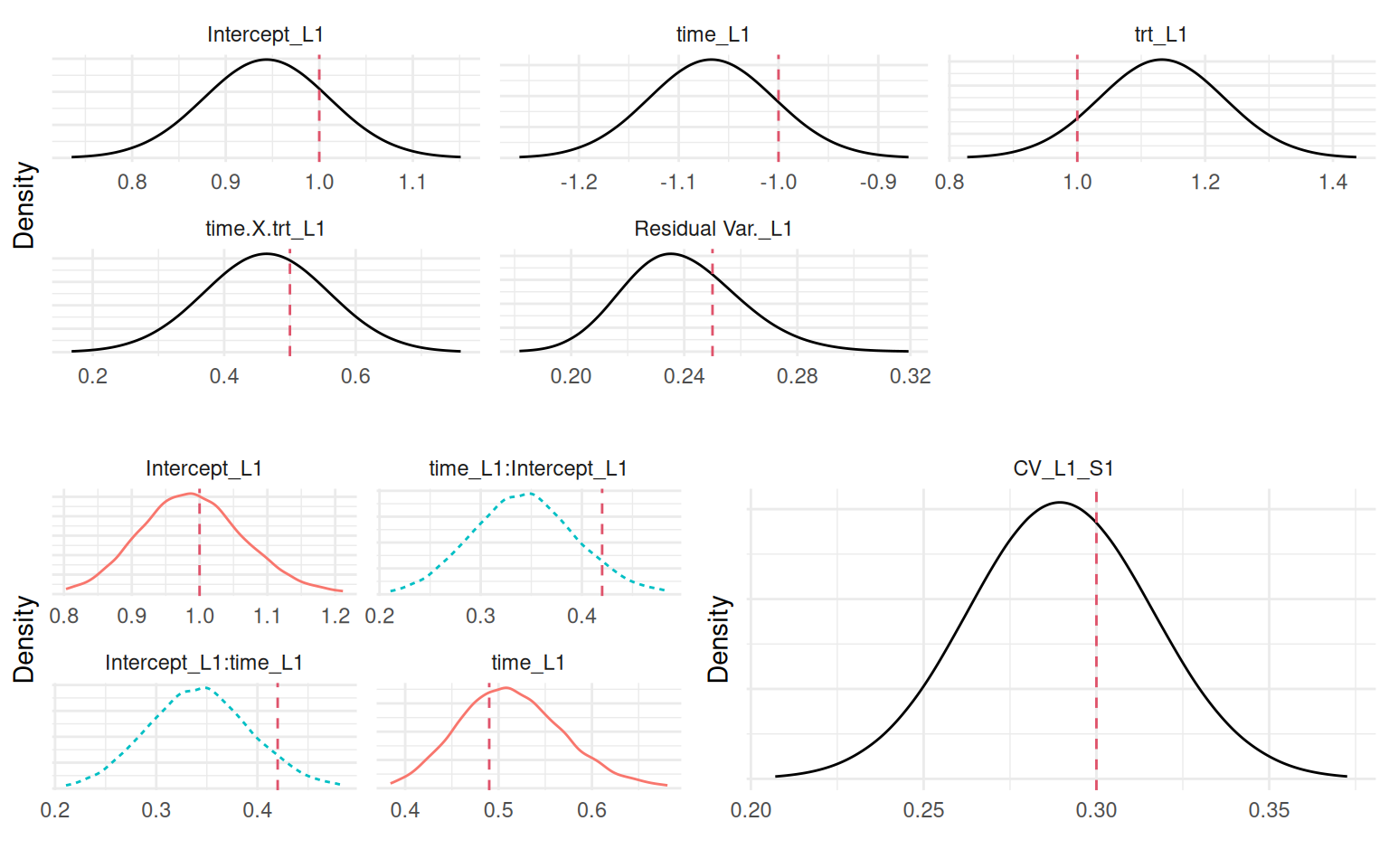

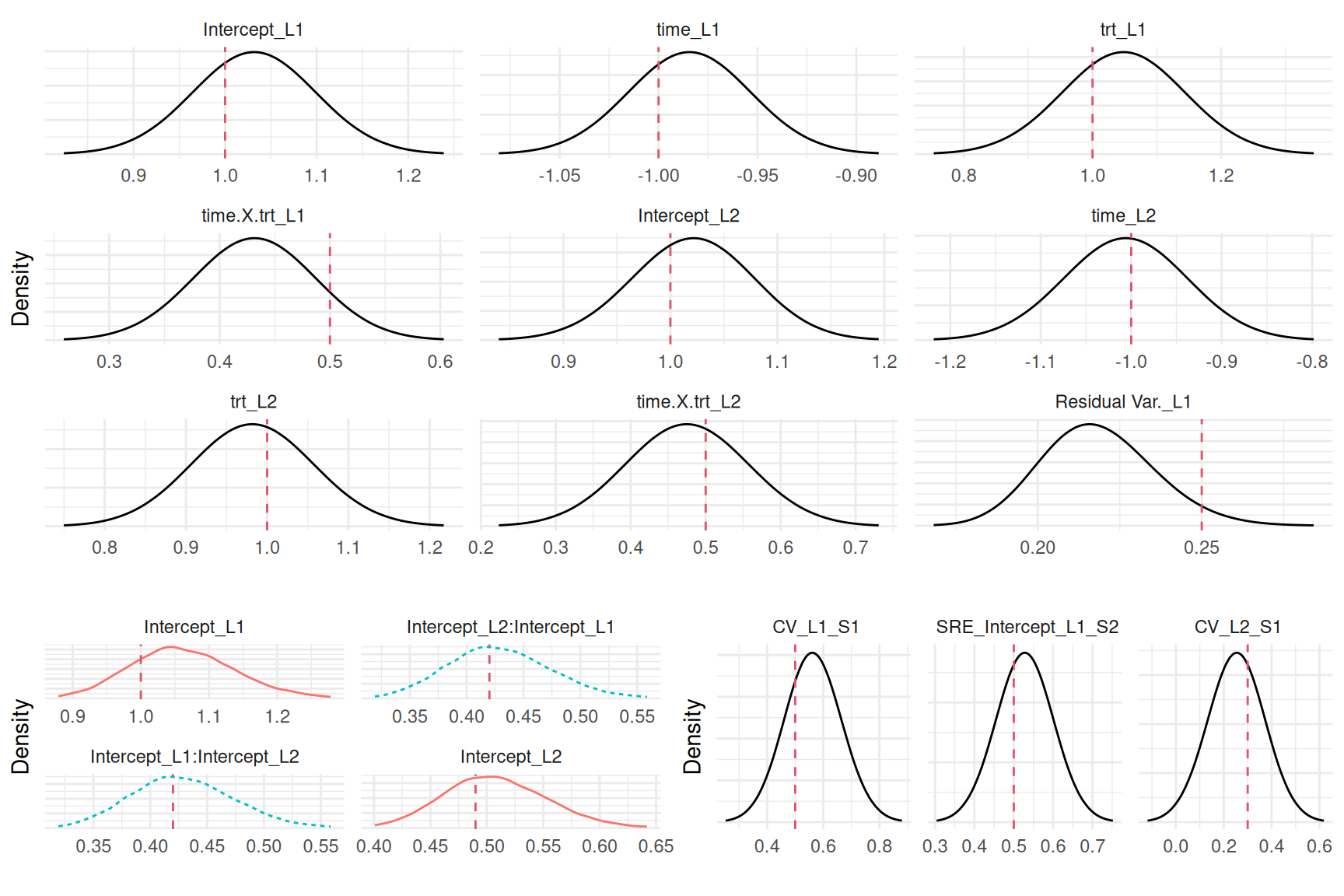

Computation time: 5.73 secondsNow the association is CV_L1_S1 for the effect of the current value of the first longitudinal submodel effect on the risk of the first survival outcome. We can plot the posterior marginals:

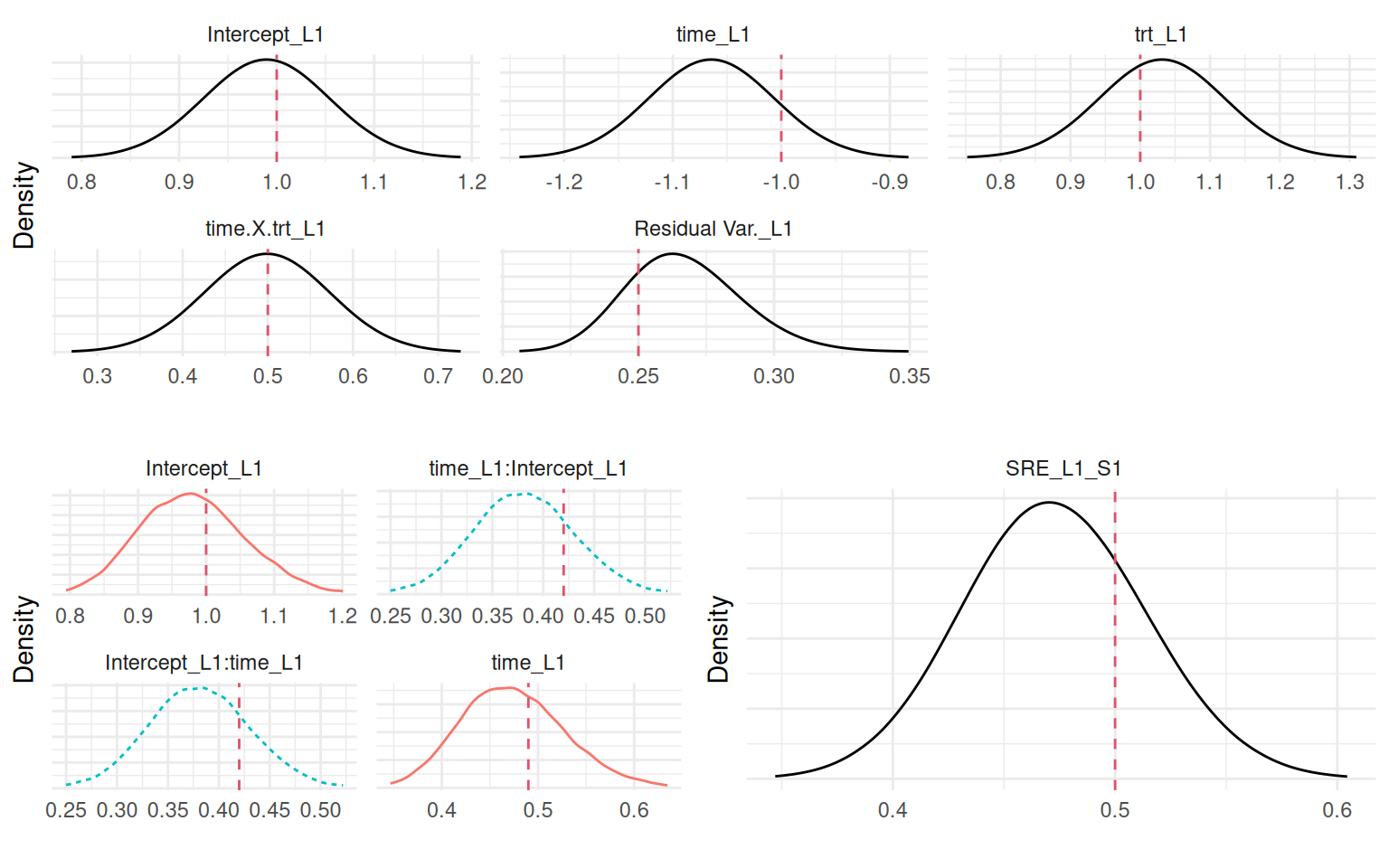

Plots_jm <- plot(M_jmCV)

ggarrange(eval(parse(text=P1)),

ggarrange(eval(parse(text=P2)),

eval(parse(text=P3)), ncol=2), nrow=2)

Of note, the current value (CV) and shared random effects (SRE) associations are equivalent in the absence of covariates in the longitudinal part of the model and when the covariates are also included in the survival part, because in these cases the hazard is simply shifted by a constant.

4.3.4 Current slope association structure

While the previous association assume that the risk of event at time \(t\) depends on the value of the outcome at the same time \(t\), we may be more interested in the dynamic behavior of the marker. The derivative of the linear predictor with respect to time, describes some aspect of the evolution of the marker. It corresponds to the trend (or the rate of change) at time \(t\), where a positive value means the marker is increasing while a negative means it decreases. It is sometimes of interest to assume that the risk of event depends on the trend of the marker at time \(t\), rather than its value. The association parameter is then the log-hazard ratio for a one-unit increase in this rate of change. Accounting for the current slope of the biomarker provides a different perspective on the risk, allowing the model to distinguish between individuals who may have the same marker level but different underlying trends.

For instance, if two patients have a biomarker value of 10, but one’s marker is rapidly increasing while the other’s is decreasing, the CS structure can estimate if the former has a higher risk, independent of their current value. We can define the following model: \[\begin{equation*} \left\{ \begin{array}{rl} \textrm{E}[Y_{i}(t)]&= \eta_i(t)\\ &= \beta_0 + b_{i0} + (\beta_1 + b_{i1})\cdot t + \beta_2\cdot trt_i + \beta_3\cdot trt_i\cdot t \\ \lambda_i(t)&=\lambda_0(t)\exp\left(\gamma\cdot \left(\frac{\mathrm{d}\eta_i(t)}{\mathrm{d}t}\right)\right) \end{array} \right. \end{equation*}\] Where the derivative of the linear predictor from the longitudinal submodel is shared and scaled with parameter \(\gamma\). Given the simplicity of the proposed model, our derivative only includes the fixed effect of time, the random slope as well as the treatment effect over time.

For more complex longitudinal models, such as described in Section 3.2.5, where splines are used to describe the evolution of the marker over time, the current slope parametrization in INLAjoint is computing the derivative of the linear predictor numerically using the R package NumDeriv (Gilbert & Varadhan (2019)), which requires to setup any function of time as described in Section 3.2.5. We can simulate data from the same random intercept-slope model as in previous sections:

CS_i <- (b_1 + b22) + b_3*trt2

DatTmp <- permalgorithm(n, n_i2, Xmat=matrix(CS_i, ncol=1), betas=c(s_1))

DatTmp2 <- DatTmp[which(!duplicated(DatTmp$Id, fromLast = T)),

c("Id","Event","Stop")]

datJM4_S <- data.frame(id = 1:n, eventTimes = mestime2[DatTmp2$Stop+1],

eventIndic = DatTmp2$Event, trt = trt)

datJM4_L <- datJM_Lt[-unlist(sapply(1:n, function(x)

which(datJM_Lt$time>=datJM4_S$eventTimes[datJM4_S$id==x] &

datJM_Lt$id==x))),]And we can fit the model by simply setting the argument assoc="CS", which stands for “current slope” (of the linear predictor):

M_jmCS <- joint(formSurv = inla.surv(eventTimes, eventIndic) ~ -1,

formLong = Y ~ time * trt + (1 + time | id),

dataSurv = datJM4_S, dataLong=datJM4_L,

id="id", timeVar="time", assoc="CS")Internally the data has the same structure as with assoc="CV", except that now only fixed and random effects that interacts with time or functions of time are contributing to the shared part. We can directly plot the posterior marginals to compare with true values used for data generation:

Plots_jm <- plot(M_jmCS)

ggarrange(eval(parse(text=P1)),

ggarrange(eval(parse(text=P2)),

eval(parse(text=P3)), ncol=2), nrow=2)

Again, the model is able to capture all the parameters posterior from the data information.

4.3.5 Current value and current slope association structure

We may be interested in both the effect of the value of the marker as well as its rate of change on the risk of an event. This can be done with the association assoc="CV_CS", which simply combines the two previous options. The corresponding model is: \[\begin{equation*}

\left\{ \begin{array}{rl}

\textrm{E}[Y_{i}(t)]&= \eta_i(t)\\

&= \beta_0 + b_{i0} + (\beta_1 + b_{i1})\cdot t + \beta_2\cdot trt_i + \beta_3\cdot trt_i\cdot t \\

\lambda_i(t)&=\lambda_0(t)\exp\left(\gamma_1\cdot \eta_i(t) + \gamma_2\cdot \left(\frac{\mathrm{d}\eta_i(t)}{\mathrm{d}t}\right)\right)

\end{array}

\right.

\end{equation*}\] This model includes two association parameters allowing for the simultaneous evaluation of both the marker’s level and its trend effect on the risk of event. This model can evaluate if a high biomarker value is increasing the risk in itself and whether an increase in the biomarker confers an additional risk, even after accounting for its current value. This structure is useful when both the state and the dynamics of a disease process are thought to be prognostic. We can simulate data from this model using the permutation algorithm, where the event times are conditional on two time-dependent components:

DatTmp <- permalgorithm(n, n_i2,

Xmat=matrix(c(CV_i, CS_i), ncol=2),

betas=c(s_1, s_2)) # association

DatTmp2 <- DatTmp[which(!duplicated(DatTmp$Id, fromLast = T)),

c("Id","Event","Stop")]

datJM5_S <- data.frame(id = 1:n, eventTimes = mestime2[DatTmp2$Stop+1],

eventIndic = DatTmp2$Event, trt = trt)

datJM5_L <- datJM_Lt[-unlist(sapply(1:n, function(x)

which(datJM_Lt$time>=datJM5_S$eventTimes[datJM5_S$id==x] &

datJM_Lt$id==x))),]Then we can fit the model:

M_jmCVCS <- joint(formSurv = inla.surv(eventTimes, eventIndic) ~ -1,

formLong = Y ~ time * trt + (1 + time | id),

dataSurv = datJM5_S, dataLong=datJM5_L,

id="id", timeVar="time", assoc="CV_CS")and we can have a look at the results:

Plots_jm <- plot(M_jmCVCS)

ggarrange(eval(parse(text=P1)),

ggarrange(eval(parse(text=P2)),

eval(parse(text=P3)), ncol=2), nrow=2)

4.3.6 Cumulative value with area under the curve association structure

So far we assumed associations where the hazard at time \(t\) depends on components from the longitudinal marker at time \(t\). For some applications, this does not sound appropriate, for example let’s consider the relationship between air pollution and the risk of lung cancer. The risk at time \(t\) could be explained by some accumulated exposure up to \(t\) but may not be related to the exposure at \(t\). In such context, we are interested in the cumulative effect of the marker on the risk of event at time \(t\). By integrating the longitudinal trajectory over time, this association structure captures the overall exposure to the longitudinal marker. Unlike simpler association structures that rely on current values or random effects, this approach considers the entire history of the longitudinal measurements up to a given time point. The cumulative value association structure is implemented by including the integral of the longitudinal trajectory as a time-dependent covariate in the survival submodel. Because of the decomposition of the follow-up in small intervals, we can compute the required integral directly with a linear combination of the parameters.

When sharing the current value of the linear predictor (see Section 4.3.3), the linear predictor of the longitudinal is computed for each interval of the follow-up of the survival, such that the weight of the shared component is a diagonal identity matrix (i.e., each computed value of the linear predictor corresponds to one interval of the survival submodel). We can take advantage of this structure and change this diagonal identity matrix to weight the linear predictor such that each interval of follow-up includes the linear predictor of previous intervals.

The model is defined as follows: \[\begin{equation*} \left\{ \begin{array}{rl} \textrm{E}[Y_{i}(t)]&= \eta_i(t)\\ &= \beta_0 + b_{i0} + (\beta_1 + b_{i1})\cdot t + \beta_2\cdot trt_i + \beta_3\cdot trt_i\cdot t \\ \lambda_i(t)&=\lambda_0(t)\exp\left(\gamma \cdot \int_{s=0}^{t}\eta_i(s)\mathcal{d}s\right) \end{array} \right. \end{equation*}\] We define the interval to start at time 0 here, such that the entire history of the marker affects the risk at time \(t\) but we could also assume a specific window of exposure, as illustrated hereafter.

Let’s start by simulating some data. First we compute cumulative values of the marker, reusing the linear predictor simulated earlier for simplicity. Then we use the permutation algorithm to simulate event times that depend on the time-dependent area under the curve of the biomarker.

CUM_CV_i <- rep(NA, length(CV_i))

for(i in 1:n){

CUM_CV_i[(n_i2*(i-1)+1):((n_i2*i))] <- cumsum(

c(0, CV_i[(n_i2*(i-1)+1):((n_i2*i)-1)])*gap2)

}

DatTmp <- permalgorithm(n, n_i2, Xmat=matrix(CUM_CV_i, ncol=1), betas=s_1)

DatTmp2 <- DatTmp[which(!duplicated(DatTmp$Id, fromLast = T)),

c("Id","Event","Stop")]

datJM6_S <- data.frame(id = 1:n, eventTimes = mestime2[DatTmp2$Stop+1],

eventIndic = DatTmp2$Event, trt = trt)

datJM6_L <- datJM_Lt[-unlist(sapply(1:n, function(x)

which(datJM_Lt$time>=datJM6_S$eventTimes[datJM6_S$id==x] &

datJM_Lt$id==x))),]Here, the risk at time \(t\) depends on the cumulative value of the marker since time \(0\). We start by defining the joint model with current value association structure, but we do not run the INLA algorithm yet by adding the argument run=FALSE (see Section 1.5.8):

M_jmCUM_CV0 <- joint(formSurv = inla.surv(eventTimes, eventIndic) ~ -1,

formLong = Y ~ time * trt + (1 + time | id),

dataSurv = datJM6_S, dataLong=datJM6_L,

id="id", timeVar="time", assoc="CV",

run=FALSE)Now we want to replace the weights for the shared current value, we first need to create a matrix of weights. We can extract from the object we just created the size of each follow-up interval in the survival part of the joint model: